On March 24, 2026, attackers reportedly slipped malicious code into two versions of LiteLLM—a Python library and API gateway that sits inside millions of enterprise AI stacks. Organizations that didn’t know LiteLLM was running in their environment had no way of knowing they were exposed.

That last point is a recurring problem. Supply chain attacks are inevitable, yet most organizations lack the controls to catch them. Gartner® puts a number on it: Only 15% of IT leaders strongly believe they have the right governance models in place to manage AI agents in their enterprise applications, while 84% believe they need additional technical controls to mitigate risk.

Though the Gartner research focuses on embedded AI in enterprise applications, we see the same issue in open-source environments where custom enterprise AI gets built.

Gartner has identified seven AI-specific trust, security and risk management controls, or AI TRiSM, that organizations need to enforce AI governance. We believe each control maps to an important question that IT leaders and executive decision-makers are already asking.

Can you answer “yes” to all of them?

1. Do you know every AI tool running in your environment?

You already have a list of your approved AI tools. But do you also have a list of the models your developers pulled from Hugging Face last month? What about the LLM wrapper your software engineers installed to prototype an internal chatbot, or the transitive dependency that came along for the ride?

If you don’t, you’re not alone: 62% of security practitioners say they have no visibility into where LLMs are in use across their organization. And 75% say they see the writing on the wall: Shadow AI will eclipse the risks once caused by shadow IT.
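One narrow piece of that visibility can be sketched in a few lines. The example below assumes you only want to know which AI-related Python packages are installed in a single environment. The watchlist is a hypothetical starting point, not a complete inventory, and the function name is ours. It uses the standard library's `importlib.metadata`, so it sees transitive dependencies too, but only in the interpreter it runs under.

```python
from importlib import metadata

# Hypothetical watchlist of AI-related package names; extend it for your environment.
AI_PACKAGE_WATCHLIST = {"litellm", "openai", "anthropic", "transformers", "langchain"}


def find_ai_packages(watchlist=AI_PACKAGE_WATCHLIST):
    """Return {name: version} for installed distributions whose name is on the watchlist."""
    found = {}
    for dist in metadata.distributions():
        meta = dist.metadata
        # Some broken installs have no readable metadata; skip them.
        name = (meta.get("Name") if meta is not None else None) or ""
        if name.lower() in watchlist:
            found[name.lower()] = dist.version
    return found


if __name__ == "__main__":
    for name, version in sorted(find_ai_packages().items()):
        print(f"{name}=={version}")
```

Running a check like this across build images and developer machines would show where a library such as LiteLLM is present, including as a dependency nobody installed on purpose.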

To get the visibility your IT leaders expect, you need a centralized view into every model in your environment—what it is, who approved it, what its security posture looks like, and whether its licensing terms match how it’s being used. A curated model catalog gives you a governed source of approved models so your teams have a sanctioned path to the tools they need.

When building or evaluating your model catalog, be sure each model comes with an AI bill of materials. AIBOMs give you a component-level inventory of models’ dependencies, origin, training history, and known vulnerabilities so you have a clear risk profile for each.
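To make the idea concrete, here is a minimal sketch of what one AIBOM entry might hold. The class, field names, and checks are illustrative assumptions based on the attributes listed above. They do not follow any particular AIBOM standard or product schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AIBOMEntry:
    """One model's record in a hypothetical AI bill of materials."""
    model_name: str
    origin: str                          # where the model came from
    license: str                         # licensing terms to compare against usage
    approved_by: Optional[str] = None    # who approved it, if anyone
    dependencies: List[str] = field(default_factory=list)
    training_history: List[str] = field(default_factory=list)
    known_vulnerabilities: List[str] = field(default_factory=list)


def risk_flags(entry: AIBOMEntry) -> List[str]:
    """Return human-readable gaps in the entry's risk profile."""
    flags = []
    if entry.approved_by is None:
        flags.append("no recorded approver")
    if not entry.training_history:
        flags.append("training history missing")
    if entry.known_vulnerabilities:
        flags.append(f"{len(entry.known_vulnerabilities)} known vulnerabilities")
    return flags


entry = AIBOMEntry(model_name="example-model", origin="public model hub", license="apache-2.0")
print(risk_flags(entry))  # ['no recorded approver', 'training history missing']
```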

2. Are your AI policies actually being enforced?

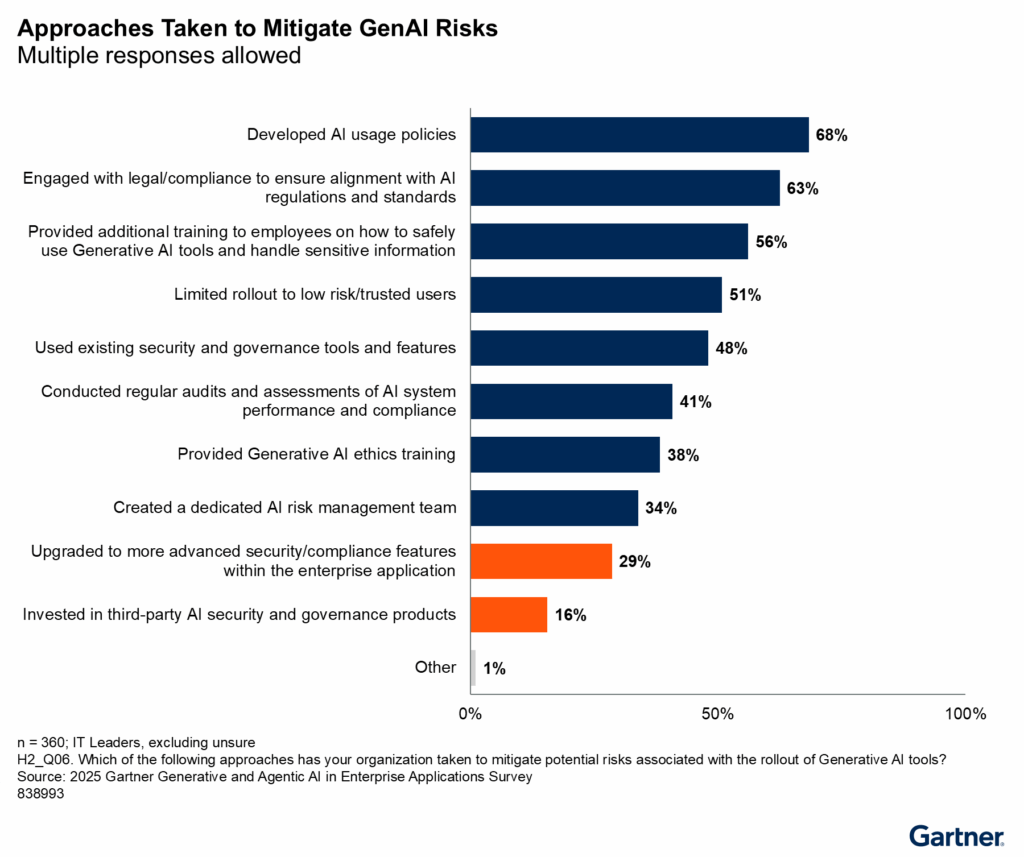

According to Gartner®, 68% of IT leaders have developed AI usage policies. But only 16% are investing in dedicated AI security and governance products.

That’s a big problem, considering AI risk materializes at runtime. How can you be certain that your policies are working without the infrastructure to verify it?

With runtime enforcement, you can. Automated policy controls evaluate AI interactions in real time, and they flag or block any that fall outside your organization’s defined boundaries. That means your IT leadership or AI governance committee can set the rules, and they’ll automatically be enforced.
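As a rough illustration of the pattern, the sketch below evaluates a single AI interaction against two rules and returns allow, flag, or block. The approved-model list, the sensitive-data pattern, and the function names are all hypothetical. A real enforcement layer would sit in a gateway or proxy and cover far more than this.

```python
import re
from dataclasses import dataclass


@dataclass
class Decision:
    action: str  # "allow", "flag", or "block"
    reason: str


# Hypothetical policy inputs, set by an AI governance committee.
APPROVED_MODELS = {"approved-model-a"}
SENSITIVE_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")  # looks like a US Social Security number


def evaluate(model: str, prompt: str) -> Decision:
    """Check one interaction against the policy before it reaches the model."""
    if model not in APPROVED_MODELS:
        return Decision("block", f"model '{model}' is not approved")
    if SENSITIVE_PATTERN.search(prompt):
        return Decision("flag", "prompt matches a sensitive-data pattern")
    return Decision("allow", "within policy")


print(evaluate("unvetted-model", "Summarize this memo").action)       # block
print(evaluate("approved-model-a", "Customer SSN is 123-45-6789").action)  # flag
print(evaluate("approved-model-a", "Summarize this memo").action)     # allow
```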

3. Can you prove your data never leaves your environment?

Your AI usage policy almost certainly says “don’t share company data with unapproved tools,” but you can’t enforce what you can’t see. A 2025 report found that 77% of employees paste data into GenAI tools, and 82% of it comes from unmanaged accounts (i.e., accounts that have no enterprise oversight).

For now, there isn’t a foolproof solution. Preventing data leakage is as much a behavioral problem as it is an infrastructure one. But standardizing your deployment architecture is the most direct lever you have. When models run on infrastructure your organization controls, such as a virtual private cloud (VPC) or on-premises environment, your data doesn’t transit third-party systems at all.

For organizations operating AI systems that fall under the EU AI Act’s high-risk classification, August 2026 is the deadline to have data governance and oversight controls in place.

4. Would you know if one of your AI agents was compromised?

Gartner® describes this risk with an example: An AI agent is compromised, and in addition to its original actions, it now sends an email to an external domain.

Scenarios like this are increasingly common: one in eight enterprise security incidents now involves an agentic AI system as the primary target, a contributing vector, or an amplifier of breach impact. But to detect a compromised agent, you often need capabilities that enterprise security stacks don’t have, such as action-level logging, behavioral baselining, and anomaly detection specific to AI systems.

Security and governance tooling built for AI gives you exactly those capabilities: A clear record of what each agent does, and an alert whenever something deviates from its baseline.
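The sketch below shows the simplest possible version of that idea, using the compromised-agent email scenario described above. It assumes a baseline is just the set of (action, target) pairs an agent was observed performing during a trusted period. The class and method names are hypothetical, and production tooling would use much richer behavioral signals.

```python
from collections import defaultdict


class AgentMonitor:
    """Logs every agent action and reports ones not seen in the agent's baseline."""

    def __init__(self):
        self.baseline = defaultdict(set)  # agent_id -> {(action, target), ...}
        self.log = []                     # action-level record of everything observed

    def learn(self, agent_id, action, target):
        """Add an action observed during a trusted period to the baseline."""
        self.baseline[agent_id].add((action, target))

    def record(self, agent_id, action, target):
        """Log the action; return True if it deviates from the baseline."""
        self.log.append((agent_id, action, target))
        return (action, target) not in self.baseline[agent_id]


monitor = AgentMonitor()
monitor.learn("invoice-agent", "send_email", "corp.example")

print(monitor.record("invoice-agent", "send_email", "corp.example"))      # False
print(monitor.record("invoice-agent", "send_email", "external.example"))  # True
```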

5. Do you control who can build and deploy AI agents?

Just 1 in 5 companies have a mature model for governance of autonomous AI agents. Is yours one of them?

A 2026 survey of 900+ executives and technical practitioners found that while 81% of technical teams have moved AI agents into active testing or production, only 14% report that all agents go live with full approval from security and/or IT. Agents are getting deployed quickly, but the approval process hasn’t kept up.

Role-based access controls and policy enforcement let you manage access to AI models for building agents. They also help you answer three key questions about every agent in your environment:

- Who built it?

- What can it access?

- Is that access still appropriate?
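Here is a minimal sketch of how those checks could fit together. The role names, permissions, and 90-day review window are assumptions chosen for illustration. They are not a recommended policy.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Set

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "analyst": set(),
    "ml_engineer": {"build_agent"},
    "platform_admin": {"build_agent", "deploy_agent"},
}


def can(role: str, permission: str) -> bool:
    """Return True if the role grants the permission; unknown roles grant nothing."""
    return permission in ROLE_PERMISSIONS.get(role, set())


@dataclass
class AgentRecord:
    name: str
    built_by: str                                   # Who built it?
    access: Set[str] = field(default_factory=set)   # What can it access?
    last_reviewed: date = date.min                  # When was that access last confirmed?


def needs_review(record: AgentRecord, today: date, max_age_days: int = 90) -> bool:
    """Is that access still appropriate? Flag records whose last review is too old."""
    return today - record.last_reviewed > timedelta(days=max_age_days)


agent = AgentRecord("invoice-agent", built_by="j.doe", access={"billing_db"},
                    last_reviewed=date(2026, 1, 1))
print(can("ml_engineer", "deploy_agent"))      # False
print(needs_review(agent, date(2026, 6, 1)))   # True
```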

6. Do you know what your AI is costing you?

The financial outlay for AI is getting tougher to track. Roughly 4 in 5 companies miss their AI cost forecasts by 25% or more, and 84% report “significant gross margin erosion tied to AI workloads.” The projections are often more volatile for companies that use agentic models, which require 5-30 times more tokens per task than a standard GenAI chatbot.

To effectively govern AI costs, you’ll need to forecast and track consumption over time, especially as more vendors shift toward token-based billing.
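A back-of-the-envelope forecast shows why agentic workloads are harder to budget. The task volume, tokens per task, and price below are made-up inputs. Only the 5x to 30x multiplier comes from the figures cited above.

```python
def monthly_token_cost(tasks_per_month, tokens_per_task, price_per_million_tokens):
    """Estimated monthly spend under token-based billing."""
    return tasks_per_month * tokens_per_task * price_per_million_tokens / 1_000_000


def agentic_cost_range(chatbot_cost, low_multiplier=5, high_multiplier=30):
    """Scale a chatbot baseline by the 5x to 30x token multiplier for agentic tasks."""
    return chatbot_cost * low_multiplier, chatbot_cost * high_multiplier


# Hypothetical inputs: 100,000 tasks a month, 2,000 tokens each, $10 per million tokens.
baseline = monthly_token_cost(100_000, 2_000, 10)
print(baseline)                      # 2000.0
print(agentic_cost_range(baseline))  # (10000.0, 60000.0)
```

The same workload lands anywhere between $10,000 and $60,000 a month once it becomes agentic, which is the kind of spread that makes a single-number forecast miss.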

Quantization, which stores model weights at lower numerical precision, reduces the compute and memory overhead of running capable models, typically with only a small loss in accuracy. And because quantized models have more consistent resource consumption, they give your IT teams something they can actually forecast against.
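The memory side of that saving is simple arithmetic. The sketch below estimates the space needed for model weights alone at different precisions. The 7-billion-parameter model is a hypothetical example, and the figures ignore activations, caches, and runtime overhead.

```python
def weight_memory_gb(num_parameters, bits_per_weight):
    """Approximate memory for model weights alone, in decimal gigabytes."""
    return num_parameters * bits_per_weight / 8 / 1e9


params = 7_000_000_000  # hypothetical 7-billion-parameter model
print(weight_memory_gb(params, 16))  # 14.0
print(weight_memory_gb(params, 4))   # 3.5
```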

7. Do your employees know how to use AI safely?

According to one study, 80% of U.S. and U.K. workers use AI, but only 44% have received AI training or tools. Another survey found that only half of workers say their employer’s AI policies are “very clear,” and more than half admit to using AI in ways that may violate them.

There isn’t a single product or service that can fully answer this question for you. Gartner® says it directly: “Responsible AI education will become just as important as cybersecurity training in the years to come and should form part of your organization’s mandatory security training.”

The technical controls outlined in questions 1 through 6 create the conditions that make training meaningful: A governed AI environment for employees to safely experiment within.

If you can’t answer “yes” to all seven yet, you’re not alone.

Most security and IT leaders can’t answer all of these just yet. But by knowing where your blind spots are (and having a roadmap to address them), you can start building an environment that keeps your team productive and your enterprise data secure.

Gartner® lays out the infrastructure, roles, and technical controls you need to get there. Download the full report to see the complete framework.

Gartner, How to Mature Generative and Agentic AI Governance for Enterprise Applications, Max Goss, Stephen Emmott, Tristan Iles, 29 October 2025.

GARTNER is a registered trademark of Gartner, Inc. and/or its affiliates.