In March 2024, while running routine benchmarks, Microsoft developer Andres Freund noticed SSH logins were running a half-second slower than they should. He described it as a “weird symptom” and started poking around.

What he found was a malicious backdoor that could have compromised nearly every server on the internet. The attack was more than two years in the making, hidden upstream in a widely used compression library. Undetected, it would have given its creators a master key to hundreds of millions of computers. One open-source maintainer dubbed it “the best executed supply chain attack we’ve seen.”

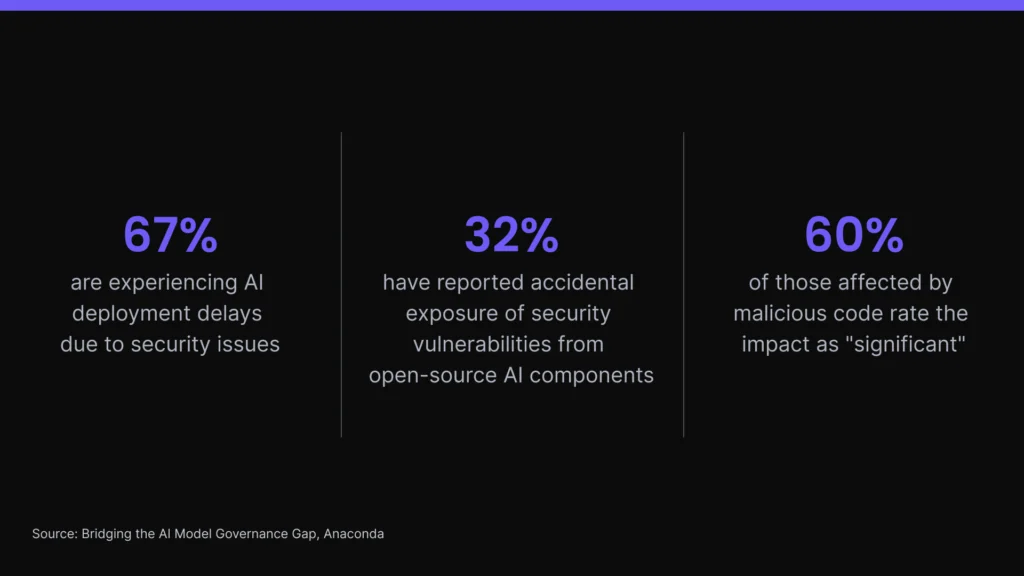

The open-source ecosystem that powers enterprise AI (the models, packages, and dependencies that virtually every AI system relies on) carries the same exposure. More than two-thirds of tech professionals are experiencing AI deployment delays due to security issues, and nearly one-third of AI practitioners have reported accidental exposure of security vulnerabilities stemming from their use of open-source AI components. And when malicious code slips through, 60% of those affected rate the impact as “significant.”

AI governance is how organizations close the gap between AI adoption and the controls that need to accompany it. This guide covers what AI governance is, why it’s non-negotiable, and how your organization can implement it at scale.

What is AI governance?

AI governance is the set of policies, processes, roles, and technical controls that determine how your organization manages AI systems, including agentic systems that take autonomous, multi-step actions on your organization’s behalf. It encompasses AI ethics, risk management, regulatory compliance, security, and accountability in a unified framework.

That definition covers the organizational discipline this guide is built around. However, it’s worth distinguishing from a second use of the term—AI governance as governmental regulation—which refers to the laws and policy frameworks that nations and international bodies apply to AI development and deployment. Both are relevant to building a responsible AI strategy, but they’re different problems. One is something you build, while the other is something you comply with.

ESG analyst Mark Beccue talks AI governance, security, and trust controls for open-source models.

Core principles of AI governance

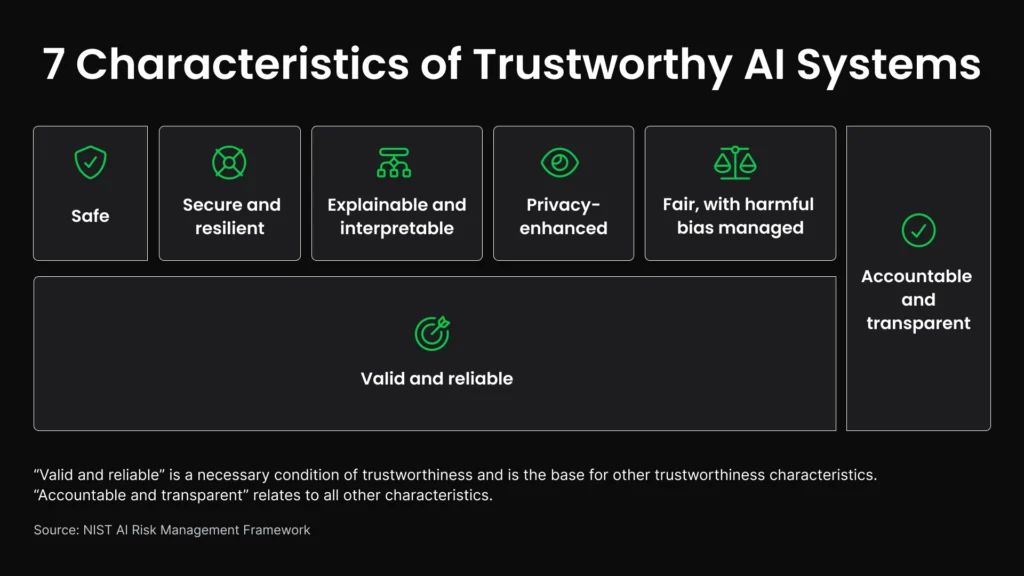

Major AI governance frameworks, which include the NIST AI RMF, the OECD AI Principles, and the EU AI Act, vary considerably in their structure and emphasis. But these six themes appear consistently across each of them, and they define what responsible AI looks like in practice:

1. Transparency and explainability

Ethical AI should produce decisions that can be understood and audited. When a model flags an application or triggers a security alert, the people affected (and the teams responsible) should be able to trace why.

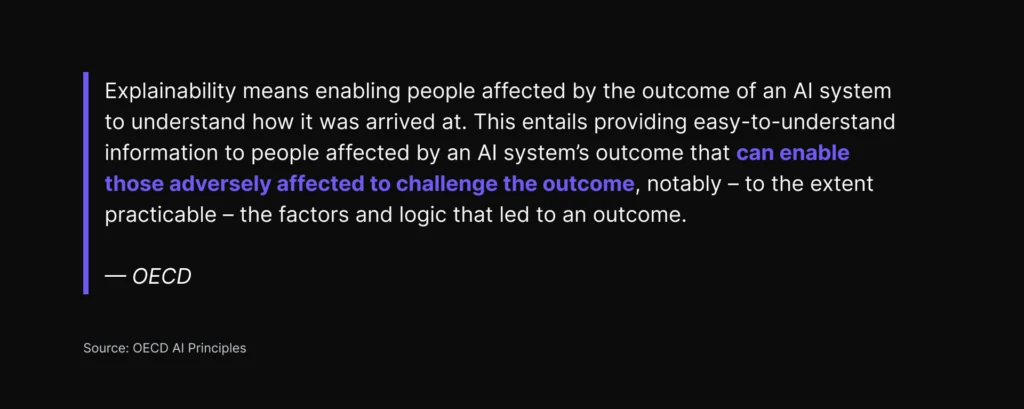

The OECD defines explainability as “enabling people affected by the outcome of an AI system to understand how it was arrived at,” specifically so they can challenge that outcome and the factors that led to it. The EU AI Act goes further, legally obligating organizations deploying high-risk AI systems to document data lineage and decision logic.

2. Accountability

Every AI system should have a clear owner who’s responsible for its performance, outputs, and failures—and for sharing updates with relevant stakeholders.

The U.S. Department of Justice now evaluates corporate AI governance programs based on how they hold employees accountable for AI use, and for whether the company has controls in place to monitor AI’s trustworthiness, reliability, and compliance with the law. NIST’s AI RMF builds accountability into its Govern function by requiring organizations to explicitly document who’s responsible for mapping, measuring, and managing AI risks.

3. Fairness and bias mitigation

AI systems should deliver equitable outcomes across demographic groups, regardless of race, gender, age, or other protected characteristics.

Achieving that requires deliberate work at the data, model, and monitoring layers, since bias in training data is typically invisible until production. That’s what happened in 2018 when Amazon scrapped its AI recruiting tool after it demonstrated a bias against female applicants. And it’s happening today with a recidivism-scoring tool used in U.S. courtrooms, which flags Black defendants as “high risk” at nearly twice the rate of white defendants. But because the tool’s algorithm is a trade secret, the courts still can’t see what’s inside it.
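One way to make “equitable outcomes” measurable at the monitoring layer is to compare how often each group receives the favorable decision. The sketch below is a minimal illustration, assuming binary decisions and a single group attribute. The function names and sample data are hypothetical, and the 0.8 cutoff is an assumption that echoes the “four-fifths” rule of thumb from U.S. employment guidance; none of the frameworks discussed in this guide prescribes it.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable decisions per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the model produced the favorable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(records):
    """Ratio of the lowest group selection rate to the highest one."""
    rates = selection_rates(records)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome, so rates are equal
    return min(rates.values()) / highest


# Hypothetical screening decisions: (group, was the applicant advanced?)
decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

ratio = disparate_impact_ratio(decisions)
needs_review = ratio < 0.8  # illustrative threshold; set per your own policy
```

A single ratio like this is only one of several fairness metrics, and a passing value does not rule out bias elsewhere in the pipeline.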

4. Safety and security

Artificial intelligence should behave as intended under normal conditions, and it should degrade predictably under adversarial ones. These are related but distinct problems: Safety governs whether a system behaves reliably and responsibly on its own, while security governs whether it can be exploited or manipulated by others.

NIST identifies both as core characteristics of trustworthy AI, noting that security and resilience encompass both resistance to attack and the ability to recover. For organizations building on open-source components, that means security governance starts at the dependency level: Every package in an AI’s stack is a potential attack surface, and vulnerabilities that enter can go undetected long after a model reaches production.

5. Privacy and data protection

Trustworthy AI systems should handle data privacy and personal data in compliance with applicable regulations. They should also maintain appropriate controls over how training and inference data is stored, accessed, and retained.

GenAI raises particular privacy risks, according to NIST. The datasets used to train AI may include reams of personally identifiable information, such as credit cards, driver’s licenses, passports, and birth certificates. Exposure to this data may cause models to leak or correctly infer sensitive details, often in ways their developers didn’t anticipate.

For organizations deploying retrieval-augmented generation (RAG) systems, the knowledge base itself is a privacy risk surface. Documents retrieved at inference time may contain sensitive information the end user isn’t authorized to see. Fine-tuning on proprietary or regulated data introduces a parallel risk: That information can be extracted from a model through carefully crafted prompts.
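For the RAG case, one common mitigation is to filter retrieved documents against the requesting user’s permissions before they are added to the prompt. The following is a minimal sketch under simple assumptions: each document carries a set of roles allowed to read it, and the `Document` class, role names, and sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles permitted to read this document


def filter_for_user(retrieved, user_roles):
    """Keep only documents the requesting user is authorized to see.

    Intended to run after retrieval and before prompt assembly, so
    unauthorized text never reaches the model's context window.
    """
    roles = set(user_roles)
    return [doc for doc in retrieved if doc.allowed_roles & roles]


# Hypothetical retrieval results for a single query
retrieved = [
    Document("handbook", "PTO policy ...", frozenset({"employee", "hr"})),
    Document("salaries", "Compensation bands ...", frozenset({"hr"})),
]

visible = filter_for_user(retrieved, user_roles={"employee"})
```

Filtering at retrieval time does not address the fine-tuning risk described above, since information learned during training is no longer attached to a document-level permission.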

6. Human oversight

Consequential AI decisions should remain subject to meaningful human review—especially in industries like healthcare, finance, and law, where small errors can have big consequences.

The EU AI Act makes this a legal requirement: High-risk AI systems must be designed to allow for human oversight. The OECD AI Principles take it a step further, calling for mechanisms that allow humans to override, repair, or decommission AI systems if they cause undue harm or exhibit undesired behavior.

With agentic systems that execute multi-step tasks autonomously, meaningful oversight should be designed into the task structure before execution begins, rather than at individual decision points. Organizations should define what the agent is authorized to do, where it must pause for human approval, and what actions require sign-off before they become irreversible.
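The three questions above (what is authorized, where the agent pauses, and what needs sign-off) can be written down as a policy that is checked before every action. This is a minimal sketch, not a production authorization system; the action names, the `POLICY` layout, and the `authorize` function are hypothetical.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


# Hypothetical policy for a single agent, written before the agent runs.
POLICY = {
    "autonomous": {"search_docs", "draft_email"},
    "needs_approval": {"send_email", "delete_record"},  # external or irreversible
}


def authorize(action, policy=POLICY, approved_by=None):
    """Decide whether an agent may execute `action`.

    Anything not listed in the policy is denied by default, which keeps
    the agent on least-privilege permissions.
    """
    if action in policy["autonomous"]:
        return Decision.ALLOW
    if action in policy["needs_approval"]:
        # A named human approver turns a paused action into an allowed one.
        return Decision.ALLOW if approved_by else Decision.REQUIRE_APPROVAL
    return Decision.DENY
```

For example, `authorize("send_email")` pauses for approval, while `authorize("send_email", approved_by="j.doe")` proceeds and leaves a name to record in the audit trail.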

What neither framework can mandate, however, is whether oversight is genuine. In Australia, an automated debt recovery system—Robodebt—had human operators responsible for validating its outputs. But many employees processed them without proper review, in part because management prioritized throughput over accuracy. Oversight is only effective when humans have the authority, time, and incentive to actually intervene when appropriate.

These six principles appear across every major governance standard because they reflect the same ethical considerations:

- Can you trust this system?

- Can you demonstrate that trust to others?

Why does AI governance matter?

AI governance is the difference between scaling successfully and stalling out. Reports show that enterprises where senior leadership actively shapes governance structures for AI achieve significantly greater business value than those that delegate it to technical teams alone.

In addition, ungoverned AI applications can leave potential risks unaddressed, leading to serious consequences for your organization and its stakeholders.

The cost of ungoverned AI

By the end of 2026, Forrester projects ungoverned use of generative AI will cost B2B companies more than $10 billion in enterprise value, driven largely by legal settlements and fines.

Those financial losses are already piling up, according to IBM’s 2025 Cost of a Data Breach Report. One in five organizations have experienced a breach linked to shadow AI, and those with “high levels” of shadow AI spent an average of $670,000 more on breach costs compared to those with low or no shadow AI. Among organizations that experienced an AI-related breach, 97% lacked proper AI access controls, and 63% had no AI governance policy at all or were still developing one when the breach occurred.

The business case for getting it right

Governed AI moves faster and costs less to operate. IBM’s data illustrates this in real financial terms: Organizations that use artificial intelligence and automation extensively throughout their security operations reduce their breach lifecycle by 80 days and save nearly $1.9 million in breach costs, on average. Gartner, too, estimates that effective AI governance technologies could reduce regulatory compliance costs by 20%, freeing up resources that organizations can redirect toward new AI initiatives and growth.

Governed AI also helps organizations build trust with their external stakeholders. Gartner expects that by 2028, enterprises using AI governance platforms will achieve 30% higher customer trust ratings and 25% better regulatory compliance scores than their competitors.

What regulations govern the use of AI?

The logical and legal cases for AI governance are converging fast. In 2024 alone, U.S. federal agencies introduced 59 AI regulations, more than double the number issued the prior year and from twice as many agencies. And since 2016, legislative mentions of AI across 75 countries have increased ninefold, according to Stanford University’s AI Index Report.

That means the principles introduced earlier in this guide increasingly carry legal weight.

EU AI Act

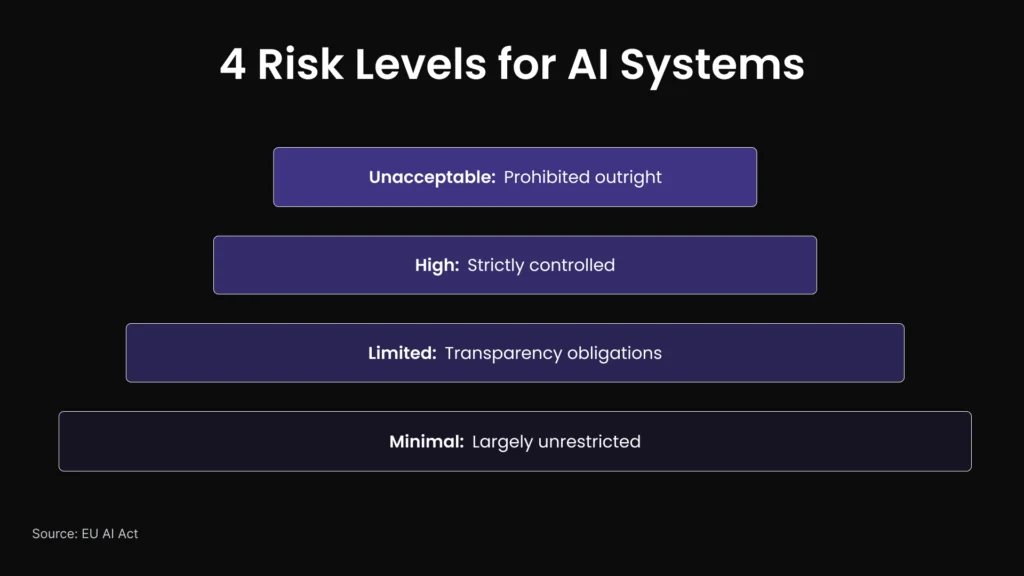

The EU AI Act is the world’s first comprehensive, risk-based AI law. It classifies AI systems into four risk tiers:

- Unacceptable (prohibited outright)

- High (strictly controlled)

- Limited (transparency obligations)

- Minimal (largely unrestricted)

High-risk systems—such as AI used in hiring, credit scoring, healthcare, and critical infrastructure—face strict pre-deployment requirements.
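For internal triage, the four tiers can be represented as a simple enumeration. The sketch below is illustrative only: the high-risk areas are the ones named in this guide, the Act’s own text is the authoritative source, and the `provisional_tier` helper is a hypothetical first-pass filter that is no substitute for legal review.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "prohibited outright"
    HIGH = "strictly controlled"
    LIMITED = "transparency obligations"
    MINIMAL = "largely unrestricted"


# Use-case areas this guide names as high-risk. Deliberately incomplete.
HIGH_RISK_AREAS = {"hiring", "credit scoring", "healthcare", "critical infrastructure"}


def provisional_tier(use_case_area):
    """Return RiskTier.HIGH for a known high-risk area, else None.

    None means "not yet classified" and should route the system to a
    human reviewer rather than default it to a lower tier.
    """
    if use_case_area.strip().lower() in HIGH_RISK_AREAS:
        return RiskTier.HIGH
    return None
```

Returning `None` instead of `RiskTier.MINIMAL` is a deliberate choice in this sketch, so an unrecognized use case is never silently treated as low risk.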

The Act applies extraterritorially, meaning any organization whose AI systems affect people in the EU falls under its jurisdiction, regardless of where the organization is headquartered. That scope makes it the most far-reaching AI law currently in force. Full enforcement begins August 2, 2026, and the most serious violations carry fines of up to 7% of annual global revenue. That revenue-based penalty structure mirrors the General Data Protection Regulation (GDPR), which set the precedent for data protection.

That parallel should be taken seriously. When GDPR first took effect in 2018, many organizations treated it as a European compliance problem. But within a few years, it became the de facto global standard for data protection. The EU AI Act is on the same trajectory.

OECD AI Principles

The OECD AI Principles, adopted in 2019 and updated in 2024, are the first intergovernmental standard for AI. They’ve been adopted by more than 40 countries and form the philosophical backbone of several national AI strategies, including the U.S. Executive Order on AI. For organizations operating across multiple jurisdictions, the OECD Principles offer a single, globally applicable set of commitments that holds across major regulatory frameworks without requiring country-by-country adaptation.

Foundational data protection laws

GDPR, HIPAA, CCPA, and SOC 2 all predate AI-specific regulation. However, they still form the compliance floor for any AI system that processes personal data. Two of these frameworks carry particular implications for AI governance.

GDPR

GDPR governs the collection, processing, and storage of personal data for anyone operating in or serving individuals in the European Union. For AI systems, this creates specific obligations that go beyond general data hygiene. GDPR’s data minimization principle limits how much personal data an AI system can ingest during training or inference. Its purpose limitation principle restricts organizations from using data collected for one purpose to train AI models serving a different one. And Article 22, often described as a “right to explanation,” gives individuals the right not to be subject to solely automated decisions that significantly affect them, along with the right to request human intervention and contest those decisions. That means high-stakes AI systems need auditable decision logic by design.

HIPAA

The Health Insurance Portability and Accountability Act governs protected health information (PHI) in the United States. For artificial intelligence in clinical settings, HIPAA creates obligations at every stage of the model lifecycle, including:

- Training data must be de-identified or subject to a business associate agreement (BAA)

- Inference outputs that involve PHI require access controls and audit logging

- A third-party vendor processing PHI on behalf of a covered entity must sign a BAA

Any AI tool that ingests clinical notes, imaging data, or patient records (even for seemingly benign purposes like scheduling optimization) falls within HIPAA’s scope.

Remember, regulations lag technology by design. That’s why building a responsible AI governance program that addresses evolving AI regulations (rather than just their current letter) is the most durable strategy for the long term.

What are the most important AI governance frameworks?

Regulations are external requirements imposed by governments and regulators, and they define what organizations must comply with. Frameworks define how to actually operationalize governance internally.

Most mature AI programs use both. These frameworks are the most relevant and widely adopted today.

NIST AI Risk Management Framework

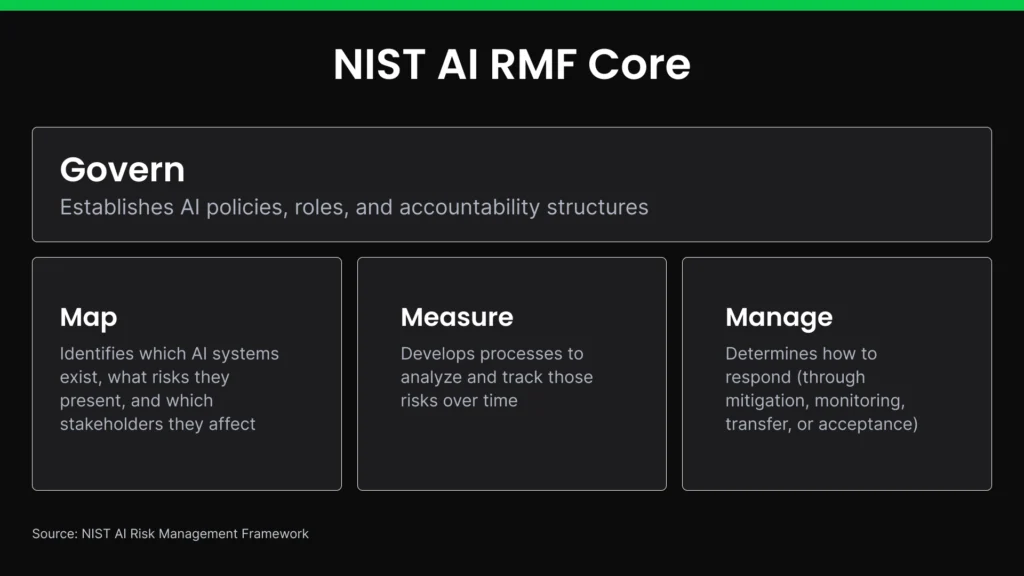

The NIST AI RMF is a voluntary, sector-agnostic framework that functions as the de facto standard in many sectors—particularly financial services, defense contracting, and healthcare, where it’s embedded in procurement requirements and audit expectations. It’s organized around a cross-cutting Govern function, which establishes AI policies, roles, and accountability structures across the organization, and three operational functions (Map, Measure, Manage) that apply those principles to specific AI systems:

- Map: Identifies which AI systems exist, what risks they present, and which stakeholders they affect.

- Measure: Develops processes to analyze and track those risks over time.

- Manage: Determines how to respond (through mitigation, monitoring, transfer, or acceptance).

In 2025, NIST released updated guidance expanding the framework to address generative AI specifically, with new provisions on model provenance, training data transparency, and AI supply chain risk. A companion resource, the December 2025 Cyber AI Profile, addresses AI security risks in depth. It includes specific guidance on the risks that arise when single agents or multi-agent systems take autonomous actions across tools, data sources, and external environments. The profile is particularly relevant to organizations building on open-source components.

ISO/IEC 42001

ISO/IEC 42001 defines requirements for a certifiable management system that covers the full AI lifecycle, including policy documentation, organizational roles, performance evaluation, and continual improvement processes.

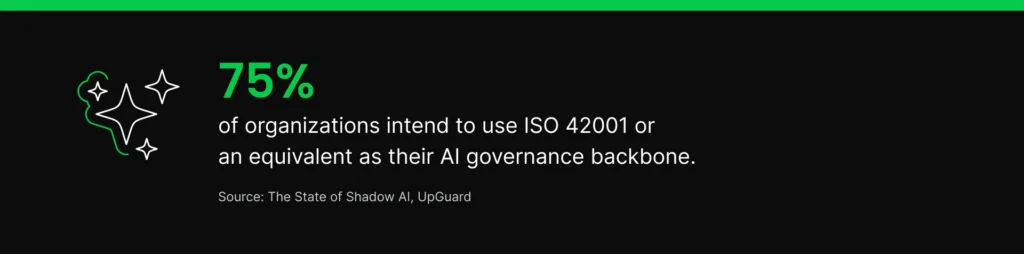

Third-party ISO 42001 certification is gaining traction in enterprise procurement. A recent benchmark of 1,000 compliance professionals found that 76% of organizations intend to use ISO 42001 or an equivalent as their AI governance backbone, and enterprise RFPs increasingly ask for AI governance proof rather than self-attested policy commitments.

Building an internal governance framework

The NIST and ISO/IEC frameworks are designed to work together. Many organizations use NIST AI RMF as a conceptual foundation, then operationalize it through ISO 42001 for measurable governance, accountability, and audit readiness. NIST itself publishes a formal crosswalk mapping its AI RMF functions to ISO 42001 controls.

Together, these frameworks point to five organizational elements that any internal governance program needs to establish:

- A cross-functional AI governance committee representing legal, security, engineering, and business leadership. This committee should own the governance program and resolve disagreements about risk tolerance.

- A risk classification system that assigns a tier to every AI system, aligned to EU AI Act categories, based on the consequences of failure.

- Model documentation standards that establish what must be recorded for each AI system, including intended use, training data lineage, ethical guidelines, known limitations, and review cadence.

- Audit processes that determine how and how often governance controls are verified, and who is accountable when gaps are found.

- Adversarial testing and red-team processes that deliberately probe AI systems for failure modes, bias, and exploitable behaviors before deployment and regularly in production. NIST’s generative AI guidance specifically calls for red-teaming as a risk measurement practice.

How do you implement AI governance?

Governance frameworks tell you what a mature program looks like, but they don’t tell you where to start.

These six steps move in sequence, with each one building upon the last. If you’re just getting started with AI governance, you can follow them in order. If you’re further along, you can read through this section to identify what’s missing and determine what to prioritize next.

1. Start with a risk assessment and AI inventory

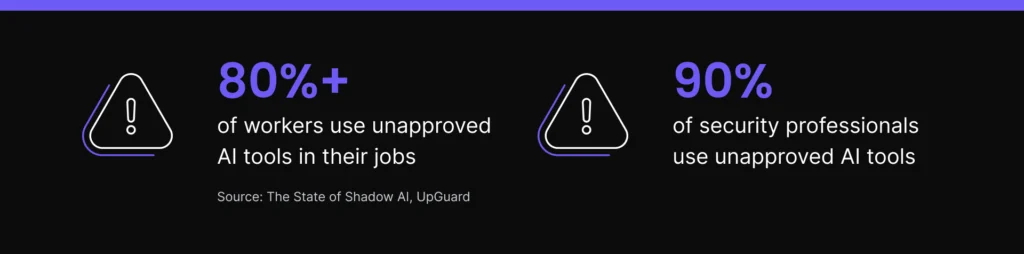

Before writing any governance policies, you need to know what you’re actually governing. Research shows more than 80% of workers use unapproved AI tools in their jobs, including nearly 90% of security professionals. If you only build AI policies around the systems and tools your IT team approves, you’ll end up governing a sliver of your organization’s actual tech stack.

NIST AI RMF’s Map function gives you the structure to capture a complete picture. It calls for conducting an AI system inventory that documents each system’s intended purpose, data sources, incident response plans, and the names and contact information for relevant AI actors responsible for it. In doing so, you’ll establish what NIST describes as a “holistic view” of your organization’s AI assets.

The inventory step also calls for classifying systems based on criticality, use cases, and potential for harm so you can quickly determine which ones need the most rigorous assessment. And for agentic AI tools, the inventory step should document what actions each tool is authorized to take autonomously, what systems it can access, and what human approval gates exist before consequential actions are executed.
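An inventory entry can be as simple as a structured record per system. The sketch below shows one possible shape, assuming the fields described above. The class name, field names, and the 1-to-3 criticality scale are hypothetical choices for illustration; NIST does not prescribe them.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    name: str
    intended_purpose: str
    data_sources: list
    owner_contact: str            # named AI actor responsible for the system
    incident_response_plan: str   # link or document ID; empty if none exists
    criticality: int              # hypothetical scale: 1 (low) to 3 (high)
    approved_by_it: bool = True
    autonomous_actions: list = field(default_factory=list)  # agentic tools only
    approval_gates: list = field(default_factory=list)


def inventory_gaps(record):
    """List the fields a reviewer still needs to fill in for one system."""
    gaps = []
    if not record.owner_contact:
        gaps.append("owner_contact")
    if not record.incident_response_plan:
        gaps.append("incident_response_plan")
    if record.autonomous_actions and not record.approval_gates:
        gaps.append("approval_gates")
    return gaps


def review_order(records):
    """Most critical systems first, so they get the most rigorous assessment."""
    return sorted(records, key=lambda r: r.criticality, reverse=True)


# Hypothetical entries, including one shadow AI tool IT never approved
inventory = [
    AISystemRecord("notes-summarizer", "Summarize meetings", ["calendar"],
                   "ops@example.com", "IR-12", 1),
    AISystemRecord("refund-agent", "Issue refunds", ["orders db"], "", "", 3,
                   approved_by_it=False, autonomous_actions=["issue_refund"]),
]
```

Here `inventory_gaps(inventory[1])` reports a missing owner, a missing incident response plan, and an agent with autonomous actions but no approval gates.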

Anaconda cofounder and Chief AI and Innovation Officer Peter Wang put it this way in Fast Company: “Before rushing to deploy the latest model, answer this: What AI technology is mature enough to be useful for us right now, and which parts of the business are ready for it?” Risk classification helps you answer that question across every AI system in your organization (even the ones IT never approved).

2. Govern your data before you govern your AI models

Ungoverned data produces ungoverned AI, regardless of what policies sit above it. In fact, Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data.

Getting your data AI-ready requires five things:

- Data quality processes that verify your training data is accurate, complete, and representative. If there’s bias at the data layer, the model will learn it as expected behavior, so it won’t surface later as drift or an error.

- Provenance tracking that details where data originated, how it was processed, and whether it meets your organization’s standards for use.

- Bias detection and mitigation methods (such as data reweighing, feature masking, and balanced sampling) to identify and correct skewed distributions before they enter production.

- Data poisoning prevention methods (such as checksums, hashes, digital signatures, and lineage tracking) applied to external data before it enters internal systems.

- RAG knowledge base governance, including version control for retrieved documents, access control policies, and freshness monitoring to flag stale content before it influences model outputs.
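Of the controls listed above, checksums are the simplest to automate. The sketch below records a SHA-256 digest when a dataset is first vetted and re-checks it before use. It relies only on Python’s standard library; the manifest layout (a mapping of relative paths to hex digests) and the function names are assumptions made for illustration.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large datasets need not fit in memory."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_against_manifest(manifest, base_dir="."):
    """Return the files whose current hash differs from the recorded one.

    `manifest` maps relative file paths to the SHA-256 hex digest recorded
    when the data was first vetted. Missing files count as mismatches.
    """
    mismatches = []
    for relative_path, expected in manifest.items():
        path = Path(base_dir) / relative_path
        if not path.is_file() or sha256_of(path) != expected:
            mismatches.append(relative_path)
    return mismatches


# Demonstration with a throwaway file
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "train.csv"
    sample.write_bytes(b"id,label\n1,0\n")
    manifest = {"train.csv": sha256_of(sample)}
    clean = verify_against_manifest(manifest, tmp)

    sample.write_bytes(b"id,label\n1,1\n")  # simulate a tampered label
    tampered = verify_against_manifest(manifest, tmp)
```

A hash check only shows that data changed after it was vetted. It says nothing about whether the data was trustworthy in the first place, which is why it sits alongside provenance tracking and signatures rather than replacing them.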

3. Secure your AI supply chain

Virtually every AI tool depends on packages and models from the open-source ecosystem, which means they inherently carry some risk. But unlike traditional software vulnerabilities, compromised AI supply chain components can alter model behavior in ways that evade standard testing.

According to Anaconda’s State of Enterprise Open-Source AI survey, 29% of practitioners say security risks are the most important challenge associated with using open-source components in AI and machine learning projects. Their concern is well-founded, too: 32% of respondents reported accidental exposure of security vulnerabilities from their use of open-source AI components, with half of those incidents rated “very” or “extremely” significant.

Fortunately, the steps you’re already taking to secure your software supply chain can be applied directly to AI artifacts:

- Automate vulnerability scanning to detect known CVEs in open-source dependencies before they reach production. (61% of respondents to Anaconda’s survey say they’re already doing this as a standard practice.)

- Generate AI bills of materials (AIBOMs) so you can maintain an auditable record of every component in each AI system in production, including packages, models, and their versions.

- Track dependencies to better understand the packages your packages depend on, which is where supply chain attacks most often enter.

- Review licenses to confirm all open-source components meet your organization’s legal requirements, since license violations can carry the same financial risk as security vulnerabilities.

- Defend against prompt injection by implementing input validation, output filtering, and least-privilege tool permissions for LLM-based applications.
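To make the AIBOM idea concrete, here is a minimal sketch that assembles packages and models into one auditable record. The JSON layout, function names, and the example model are hypothetical, and the package versions are illustrative. Standardized bill-of-materials formats such as CycloneDX or SPDX are likely a better fit for exchanging these records between tools. The `installed_packages` helper uses Python’s standard `importlib.metadata` module to read what is installed in the current environment.

```python
import json
from importlib import metadata


def installed_packages():
    """Name and version of each distribution visible to this interpreter."""
    found = {}
    for dist in metadata.distributions():
        meta = dist.metadata
        name = meta.get("Name")
        if name:  # skip broken or partial installs
            found[name] = meta.get("Version") or "UNKNOWN"
    return found


def build_aibom(system_name, packages, models):
    """Assemble a minimal, JSON-serializable bill of materials.

    `packages` and `models` each map a component name to its version.
    """
    components = [
        {"type": "package", "name": name, "version": version}
        for name, version in sorted(packages.items())
    ] + [
        {"type": "model", "name": name, "version": version}
        for name, version in sorted(models.items())
    ]
    return {"system": system_name, "components": components}


aibom = build_aibom(
    "support-chatbot",
    packages={"numpy": "1.26.4", "requests": "2.32.3"},  # or installed_packages()
    models={"example-llm-7b-q4": "2024-06"},              # hypothetical model
)
print(json.dumps(aibom, indent=2))
```

Storing one such record per release gives auditors a point-in-time answer to “what was running in production?” without re-deriving it from the environment.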

When evaluating third-party AI agent tools, look for independent certification. AIUC-1, developed with contributors including MITRE, MIT, and Stanford, is one of the first standards built specifically for AI agent security and reliability.

4. Define roles and accountability

A 2025 review of documented AI failures found that the most consequential incidents were caused by organizational failings—things like weak controls and unclear ownership—rather than technical shortcomings.

NIST AI RMF addresses this problem directly in its Govern function. GOVERN 2.1 says all AI risk roles and decision-making authority must be clearly documented and understood across the organization. That means every AI system needs a named owner who’s accountable for its outputs.

Implement a RACI framework to make those assignments explicit. Define who’s responsible for day-to-day operation and maintenance, who’s accountable for outcomes and risk decisions, who should be consulted before significant changes (typically legal, compliance, and security), and who should be informed when issues arise. Every AI tool should have a completed RACI before it ships, and that RACI should be reviewed whenever the tool’s data inputs change.
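A completed RACI can be checked automatically before a tool ships. The sketch below assumes a RACI is stored as a mapping from role to a list of names. The single-accountable-owner rule is a common RACI convention that matches the “named owner” requirement above, but it is an assumption here, as are the function and team names.

```python
RACI_ROLES = ("responsible", "accountable", "consulted", "informed")


def raci_problems(raci):
    """Return a list of reasons a RACI assignment is not ready to ship."""
    problems = []
    for role in RACI_ROLES:
        if not raci.get(role):
            problems.append(f"no one is {role}")
    if len(raci.get("accountable", [])) > 1:
        problems.append("more than one accountable owner")
    return problems


# Hypothetical assignment for a fraud-detection model
fraud_model_raci = {
    "responsible": ["ml-platform-team"],
    "accountable": ["head-of-risk"],
    "consulted": ["legal", "compliance", "security"],
    "informed": ["support-leads"],
}

issues = raci_problems(fraud_model_raci)  # empty list when the RACI is complete
```

Running the same check whenever a tool’s data inputs change is one way to enforce the review trigger described above.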

5. Monitor your AI systems and tools

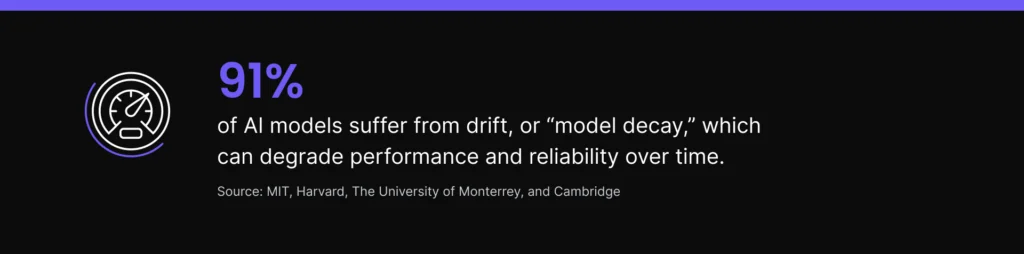

AI models don’t stay accurate on their own. Studies show 91% of them suffer from drift, or “model decay,” which can degrade performance and reliability over time. That means a model you deploy today may behave very differently a year from now.

AI governance frameworks like NIST AI RMF address this by advocating for monitoring tools that support continuous assessment, rather than periodic reviews. NIST’s 2025 updates specifically call on organizations to implement real-time continuous monitoring and anomaly detection, expand controls to cover emerging threats, and ensure audit trail transparency.

Audit logging is the other piece of the puzzle. ISO 42001 Annex A Control A.6.2.8 requires event log recording at every significant phase of an AI’s lifecycle, from pilot through production and eventual retirement.

To validate that your monitoring program addresses real-world risks, ask yourself:

- Do we track model performance against baseline metrics?

- Do we run drift detection on input data and model outputs?

- Do we run vulnerability scans for newly disclosed CVEs in dependencies?

- Do we have incident response processes that activate when thresholds are exceeded?

- Do we monitor LLM outputs for hallucination rates, toxicity, and leakage?

- Do we have a red team, and/or do we perform adversarial testing at regular intervals?
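For the drift question in particular, one widely used statistic is the Population Stability Index (PSI), which compares the distribution of a feature or score in production against a baseline. The sketch below is a dependency-free illustration, assuming equal-width bins derived from the baseline. The function name, bin count, and sample data are hypothetical. A commonly cited rule of thumb treats values above roughly 0.25 as a significant shift, but that cutoff is a convention that none of the frameworks above mandates.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a current sample.

    Bin edges come from the baseline's min and max; values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant baselines

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            counts[min(max(index, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(count / len(values), eps) for count in counts]

    base, current = proportions(expected), proportions(actual)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, current))


# Hypothetical model scores
baseline = [i / 100 for i in range(100)]        # spread evenly across [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]   # mass moved to the upper half

stable_score = psi(baseline, list(baseline))
drift_score = psi(baseline, shifted)
```

Wiring a score like `drift_score` to an alert threshold is what connects drift detection to the incident response processes in the checklist.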

6. Match your AI governance to your maturity level

AI maturity exists on a spectrum, but most enterprises are stuck at the lower end. A recent Gartner study found that although 80% of large organizations claim to have AI governance initiatives in place, fewer than half can demonstrate measurable maturity.

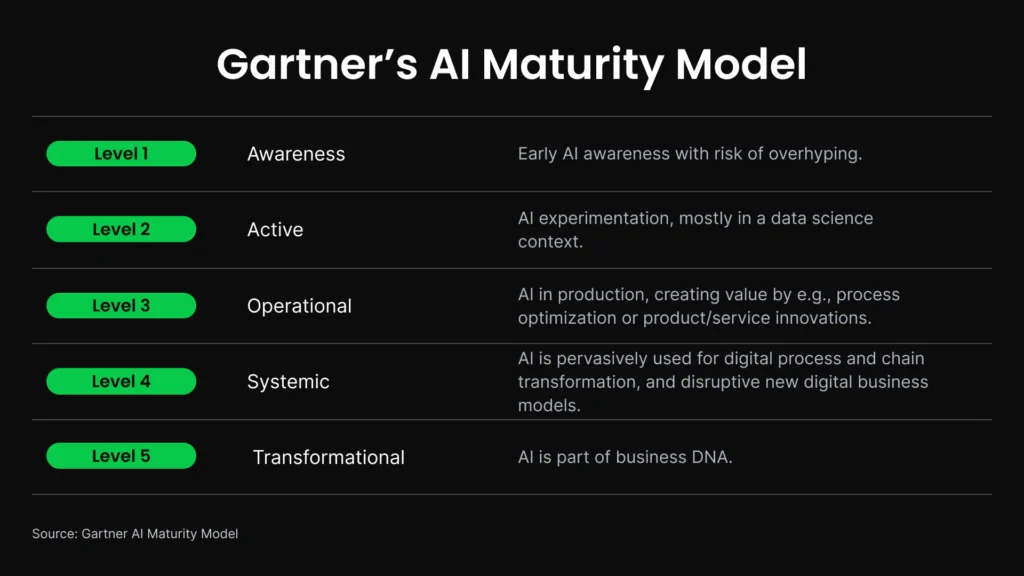

Gartner’s “AI Maturity Model” describes five progressive levels of AI governance maturity (Awareness, Active, Operational, Systemic, and Transformational). Moving from one level to the next demands intentionality: You need to focus on one priority at a time. Here’s what that looks like:

Awareness

At this level, conversations about artificial intelligence are happening, but not in a strategic way. No pilot projects or experiments are taking place.

Your top priority is to establish the foundation. Complete an AI inventory, identify the shadow AI in use across your organization, and document or assign ownership for every AI tool you find.

Active

At this level, artificial intelligence is starting to appear in proofs of concept and/or pilot projects. Meetings are focused on knowledge sharing and how to standardize tools and workflows.

Your top priority is to formalize risk classification. Stand up a cross-functional governance committee to review your isolated AI pilots before they reach production.

Operational

At this level, at least one AI project has moved to production. Best practices, experts, and technology are accessible across the enterprise. AI has an executive sponsor and a dedicated budget.

Your top priority is implementing continuous monitoring, audit logging, and supply chain controls. These form the operational infrastructure that will keep your production AI reliable over time.

Systemic

At this level, every new digital project at least considers AI-driven solutions, and new products and services have embedded AI. AI-powered applications interact productively within the organization and across the business ecosystem.

Your top priority is governance automation. Policy enforcement should be happening at runtime, and compliance evidence should be continuously generated. And if you’re deploying agentic AI systems at this level, governance automation should also include runtime authorization controls that enforce what actions an agent can take.

Transformational

At this level, AI is deeply embedded in your organization’s DNA. It continuously drives advancements in innovation, new business models, and strategic cultural transformation.

Your top priority is sustaining what you’ve built. Nearly 60% of high-maturity organizations have centralized their AI strategy, governance, data, and infrastructure capabilities, which keeps their governance effective as AI scope and complexity expand.

The organizations that do this well see measurable returns. High-maturity organizations keep their AI projects operational for three years or more at more than twice the rate of low-maturity organizations (45% versus 20%). In addition, organizations that deploy dedicated AI governance platforms are 3.4x more likely to achieve high effectiveness in AI governance than those that do not.

What makes open-source AI governance different?

Open-source AI is a catch-22 for enterprise governance: It’s both the biggest driver of AI innovation and the hardest to actually govern.

According to IBM research, 51% of businesses using open-source tools report positive ROI compared to just 41% of those that don’t. Anaconda’s own survey data puts the efficiency gains at 57%, with estimated savings of 28% in cost and 29% in time. Yet the very nature of open source means that without consistent AI practices, no single vendor is accountable for what enters your stack. A compromised open-source component, for example, can influence model behavior in ways that evade standard testing.

That tradeoff shapes how you should think about when to use open source at all. If you’re using AI as a supporting capability (e.g., automating meeting notes, summarizing internal documents, powering a support chatbot), a commercial solution with managed governance may be the right move. When AI is central to competitive differentiation, as it is for organizations building proprietary models trained on private data, open source is often the only path that delivers true control and customization. It lets you retain control over your AI stack, stabilize long-term costs, and preserve your organization’s autonomy.

But the governance rigor has to match that level of commitment.

How does the Anaconda Platform support AI governance?

The Anaconda Platform is the secure Python and AI foundation trusted by more than 50 million users worldwide and used across 95% of Fortune 100 companies. It’s specifically built around the governance challenges we’ve explored thus far, and it addresses them in two distinct but related ways:

Anaconda Core is the secure foundation for open-source package management. It provides:

- Access to thousands of vetted Python packages, with comprehensive vulnerability scanning for all packages and their dependencies

- Automated security policies that enforce your organization’s unique security requirements

- Detailed audit trails that support compliance with GDPR, HIPAA, and CCPA

In addition, Anaconda Core has license filtering built in, which ensures every open-source component meets your organization’s legal requirements before it enters a workflow.

Anaconda AI Catalyst extends AI governance to the model layer—the part of the AI supply chain that most enterprise platforms leave ungoverned. It provides:

- Pre-validated, quantized models with built-in governance controls

- AI Bills of Materials (AIBOMs) for every model in production

- Versioned and reusable model artifacts

- An inference service for deploying and serving models

- Policy controls and audit trails across AI assets

Together, Anaconda Core and AI Catalyst provide safeguards to address the full spectrum of enterprise AI governance needs: data governance, supply chain security, audit logging, role-based access controls, and compliance documentation. They also apply those safeguards specifically to the open-source AI environment, where the risk is highest and vendor accountability is lowest.

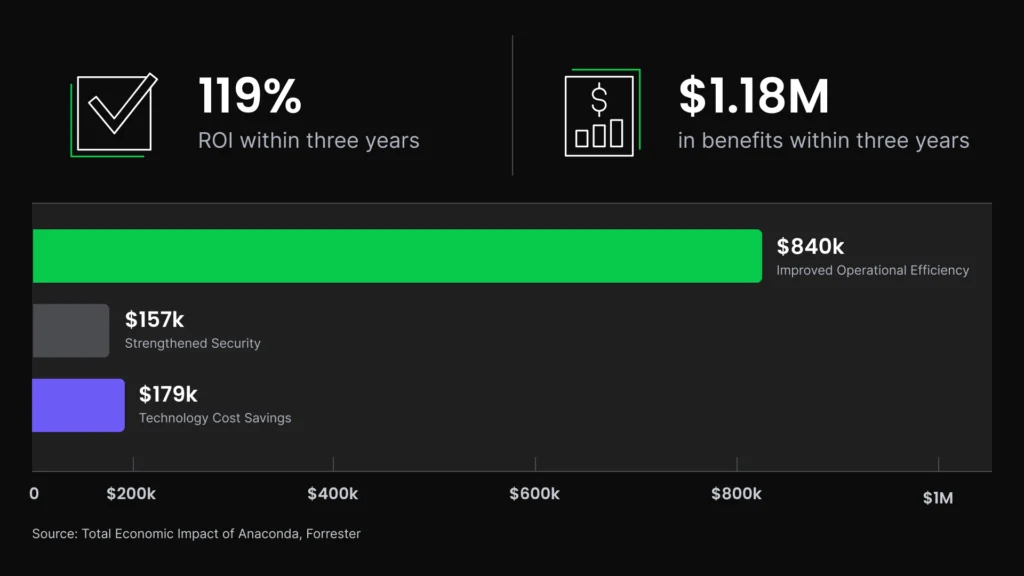

In a Total Economic Impact study commissioned by Anaconda and conducted by Forrester Consulting, a composite organization representative of interviewed customers saw a 119% ROI and $1.18M in benefits within three years. They also saw an 80% improvement in operational efficiency worth $840,000, an 80% reduction in time spent on package security management, and a 60% reduction in security breach risk from addressable attacks.

Frequently asked questions about AI governance

What is the difference between AI governance and data governance?

Data governance manages your data assets—the collection, storage, and data privacy controls that form the raw materials for AI. AI governance oversees the finished product and ensures models meet ethical standards for fairness and performance.

In practice, data governance sets the policies for how data is collected, stored, and accessed. AI governance determines what happens when that data is used to build and operate AI systems.

Which AI governance framework should my organization start with?

If your organization is moving fast with AI and needs immediate risk guardrails, we recommend starting with the NIST AI RMF. It provides lightweight risk guidance and can scale into ISO 42001 controls later. If you’re already ISO 27001 certified, ISO 42001 is a natural extension with significant control overlap.

Most mature organizations end up using both, with NIST AI RMF serving as the operational risk framework and ISO 42001 as the certifiable management system to demonstrate governance accountability to external parties.

Note: Organizations in regulated industries (e.g., financial services, healthcare, and defense contracting) should first check whether sector-specific guidance applies before selecting a primary framework. These often sit alongside NIST, rather than replacing it.

How does the EU AI Act affect organizations outside Europe?

The EU AI Act applies extraterritorially. That means any organization whose AI systems affect people in the EU falls under its jurisdiction, regardless of where that organization is headquartered. This includes, but is not limited to:

- U.S.-based companies with European customers

- Global SaaS providers

- Any enterprise whose AI outputs are accessible in EU member states

The Act’s prohibitions took effect in February 2025, and rules for general-purpose AI models apply from August 2025 onward. Full enforcement of the Act’s requirements for high-risk AI systems (those used in hiring, credit scoring, healthcare, and critical infrastructure) begins Aug. 2, 2026.

What role does open-source software play in AI governance risk?

Open-source software is typically maintained by distributed communities, with no single vendor responsible for security. That means organizations must own that responsibility themselves through vulnerability scanning, dependency tracking, license compliance review, and AIBOM generation.

According to Anaconda’s State of Enterprise Open-Source AI survey, 29% of practitioners identify security risks as the most important challenge with open-source AI components, and 32% have already experienced a vulnerability exposure incident from those components.

How can small teams implement AI governance without a dedicated compliance function?

We recommend starting with the highest-leverage activities, rather than trying to implement a complete framework at once. Complete an AI inventory to understand what’s in use, assign an owner to each system, and implement automated vulnerability scanning for open-source dependencies. In addition, NIST AI RMF’s 2025 updates call for continuous monitoring and anomaly detection, both of which can be operationalized through tooling rather than headcount.

The NIST AI RMF is free, publicly available, and designed to scale. Small teams can begin with their self-assessment worksheets and build from there.
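As a rough illustration of the first steps described above, the sketch below models an AI inventory in Python and flags systems that lack an owner or whose open-source dependencies are not yet covered by automated scanning. The `AISystem` fields, the `governance_gaps` helper, and the example entries are all hypothetical; a real inventory would likely live in a catalog or CMDB rather than in code.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AISystem:
    """One entry in a hypothetical AI inventory."""
    name: str
    owner: Optional[str] = None  # accountable person or team, if assigned
    dependencies: list[str] = field(default_factory=list)  # open-source packages in use
    scanned: bool = False  # whether automated vulnerability scanning is enabled


def governance_gaps(inventory: list[AISystem]) -> dict[str, list[str]]:
    """Return the gaps found for each system, omitting systems with none."""
    gaps: dict[str, list[str]] = {}
    for system in inventory:
        issues = []
        if not system.owner:
            issues.append("no owner assigned")
        if system.dependencies and not system.scanned:
            issues.append("dependencies not scanned")
        if issues:
            gaps[system.name] = issues
    return gaps


# Illustrative entries only; names are made up.
inventory = [
    AISystem("support-chatbot", owner="cx-team", dependencies=["transformers"], scanned=True),
    AISystem("churn-model", dependencies=["scikit-learn"]),
]
print(governance_gaps(inventory))
```

Even a small report like this gives a team without a compliance function a concrete list to work through, one system at a time.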

Why is an AI Bill of Materials (AIBOM) important?

An AIBOM is an auditable inventory of every component in an AI system. It includes information about the model, its training and inference data sources, dependencies, licenses, known vulnerabilities, and evaluations.

It serves two key functions:

- It gives security teams visibility into the attack surface of every deployed model

- It gives compliance teams the documentation that NIST AI RMF, ISO 42001, and the EU AI Act all call for with high-risk systems

Without an AIBOM, organizations can’t answer basic governance questions, such as what models are running, what they’re built on, or whether a newly disclosed CVE affects a system in production.
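To make the last question concrete, here is a minimal Python sketch of how an AIBOM can be queried when a new vulnerability is disclosed. The `AIBOM` and `Component` structures, the `systems_affected` helper, and the package name `examplelib` are assumptions made for illustration. This is not a standard AIBOM schema or any vendor's format, and real AIBOMs carry more fields (evaluations, known vulnerabilities, and so on).

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    """A dependency recorded in the AIBOM."""
    name: str
    version: str
    license: str


@dataclass
class AIBOM:
    """A simplified, hypothetical AI Bill of Materials for one deployed model."""
    model: str
    data_sources: list[str] = field(default_factory=list)
    components: list[Component] = field(default_factory=list)


def systems_affected(aiboms: list[AIBOM], package: str, vulnerable_versions: set[str]) -> list[str]:
    """Return the models whose AIBOM lists an affected version of the package."""
    return [
        bom.model
        for bom in aiboms
        if any(c.name == package and c.version in vulnerable_versions for c in bom.components)
    ]


# Illustrative data; "examplelib" is a made-up package.
aiboms = [
    AIBOM("fraud-scorer", ["transactions-2023"], [Component("examplelib", "1.2.0", "MIT")]),
    AIBOM("doc-summarizer", ["internal-wiki"], [Component("examplelib", "1.3.0", "MIT")]),
]
print(systems_affected(aiboms, "examplelib", {"1.2.0", "1.2.1"}))
```

With inventories like this in place, answering "are we affected?" becomes a lookup rather than an investigation.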


How does AI governance change for agentic AI systems?

Agentic AI systems—those that execute multi-step tasks, call external tools, and take actions autonomously—require governance controls that standard frameworks aren’t built to handle. Risk assessment must account for the full action space a system can operate in, since a single agent’s decision can cascade in ways that are difficult to anticipate.

Human oversight needs to be designed into the authorization layer before an action chain executes, rather than applied after outputs are produced. In addition, audit logging must go beyond final responses to capture tool invocation requests, inter-agent communication, and data access attempts.
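The sketch below shows one way these two ideas could fit together in Python: every tool request is logged before anything runs, and any tool outside a pre-approved set needs a human approver's sign-off first. The names `invoke_tool`, `AUTO_APPROVED_TOOLS`, and `AUDIT_LOG`, along with the example tools, are hypothetical and not tied to any particular agent framework. A production system would write to an append-only store rather than an in-memory list.

```python
import json
from datetime import datetime, timezone

AUDIT_LOG: list[str] = []  # stand-in for an append-only audit store

# Hypothetical policy: tools an agent may call without prior human approval.
AUTO_APPROVED_TOOLS = {"search_docs"}


def record(event: str, **details) -> None:
    """Append a timestamped JSON entry to the audit log."""
    entry = {"time": datetime.now(timezone.utc).isoformat(), "event": event, **details}
    AUDIT_LOG.append(json.dumps(entry))


def invoke_tool(agent, tool, args, tools, approver=None):
    """Log the request, check authorization, then run the tool only if allowed."""
    record("tool_requested", agent=agent, tool=tool, args=args)
    approved = tool in AUTO_APPROVED_TOOLS or (
        approver is not None and approver(agent, tool, args)
    )
    if not approved:
        record("tool_denied", agent=agent, tool=tool)
        raise PermissionError(f"{tool} requires human approval")
    record("tool_approved", agent=agent, tool=tool)
    return tools[tool](**args)


# Illustrative tools; a real deployment would wrap actual integrations.
tools = {
    "search_docs": lambda query: f"results for {query}",
    "send_refund": lambda amount: f"refunded {amount}",
}

invoke_tool("support-agent", "search_docs", {"query": "refund policy"}, tools)
try:
    invoke_tool("support-agent", "send_refund", {"amount": 50}, tools)
except PermissionError as exc:
    print(exc)
```

The denied request still leaves a record, which is the point: the log captures what the agent attempted, not only what it completed.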

NIST is developing formal guidance on securing AI agent systems. But organizations deploying agents now should treat risk scope, authorization design, and audit logging as the baseline.