Professional Services

A force multiplier for your AI journey.

Let our experts help you solve your toughest AI, data science, and machine learning challenges for faster development, fewer hiccups, and immediate impact.

Working with Professional Services

Our experts will work with your team to optimize performance and create a lasting AI foundation.

A Force Multiplier

Reach your destination faster and avoid technical debt by steering clear of common obstacles.

Unmatched Expertise

Our experienced team of scientists, engineers, and software developers specializes in open-source Python for machine learning, data analysis, and more.

Built for Your Needs

Whatever your use case, we can help you succeed, whether that’s optimizing code, building AI-powered workflows, or anything in between.

Services Available

Our professional services team will help your in-house experts achieve your business goals using Python.

Professional Services Support Hours

Get expert help from Professional Services with debugging, packaging, and optimizing conda for your organization.

Kickstart

Targeted bundles of Professional Services hours that help you migrate, adopt new tools, and build open-source solutions fast.

Custom Consulting

Need more than Kickstarts? Let’s discuss custom projects and deep integrations to meet your goals.

Kickstart Services

Quickly roll out a new data initiative or fast-track an existing one. We offer pre-packaged short engagements (typically 100 hours) at a fixed price. The goal is to get your team using the Anaconda Platform for immediate impact.

Environment Management

Standardize and distribute Python/R packages across your infrastructure while ensuring compliance with organizational policies.

Generative AI

Implement custom, private GenAI solutions using your data, building on open-source libraries and local LLMs.

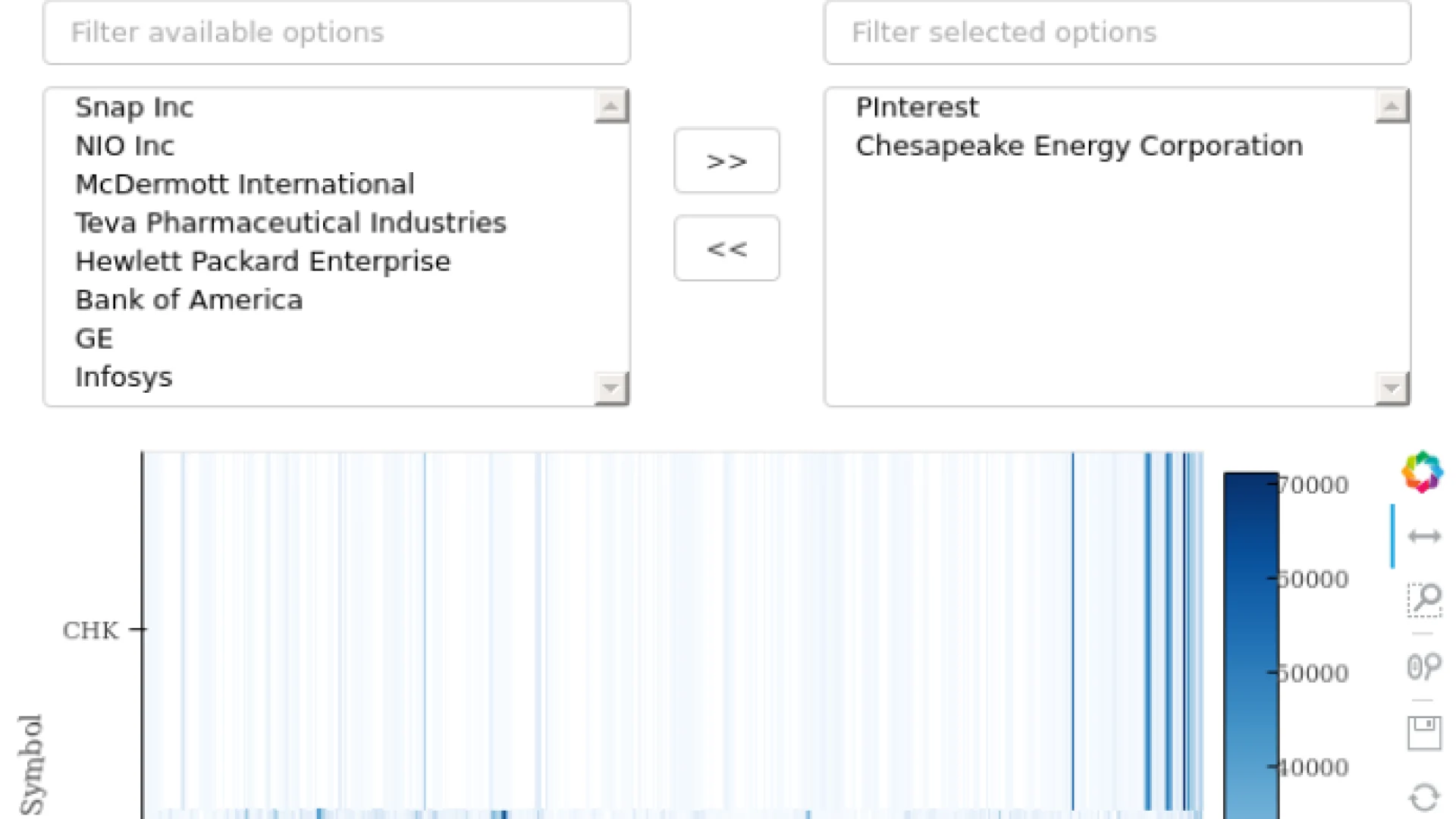

Visualization

Expert guidance to transform complex data into interactive visualizations using Python tools.

Web Applications

Transform analyses into Python-backed interactive web apps that colleagues can explore and apply to their own work.

JupyterHub

Deploy centrally managed, governed Jupyter notebooks on Kubernetes/OpenShift or on a single machine, running on your own hardware to keep your data secure.

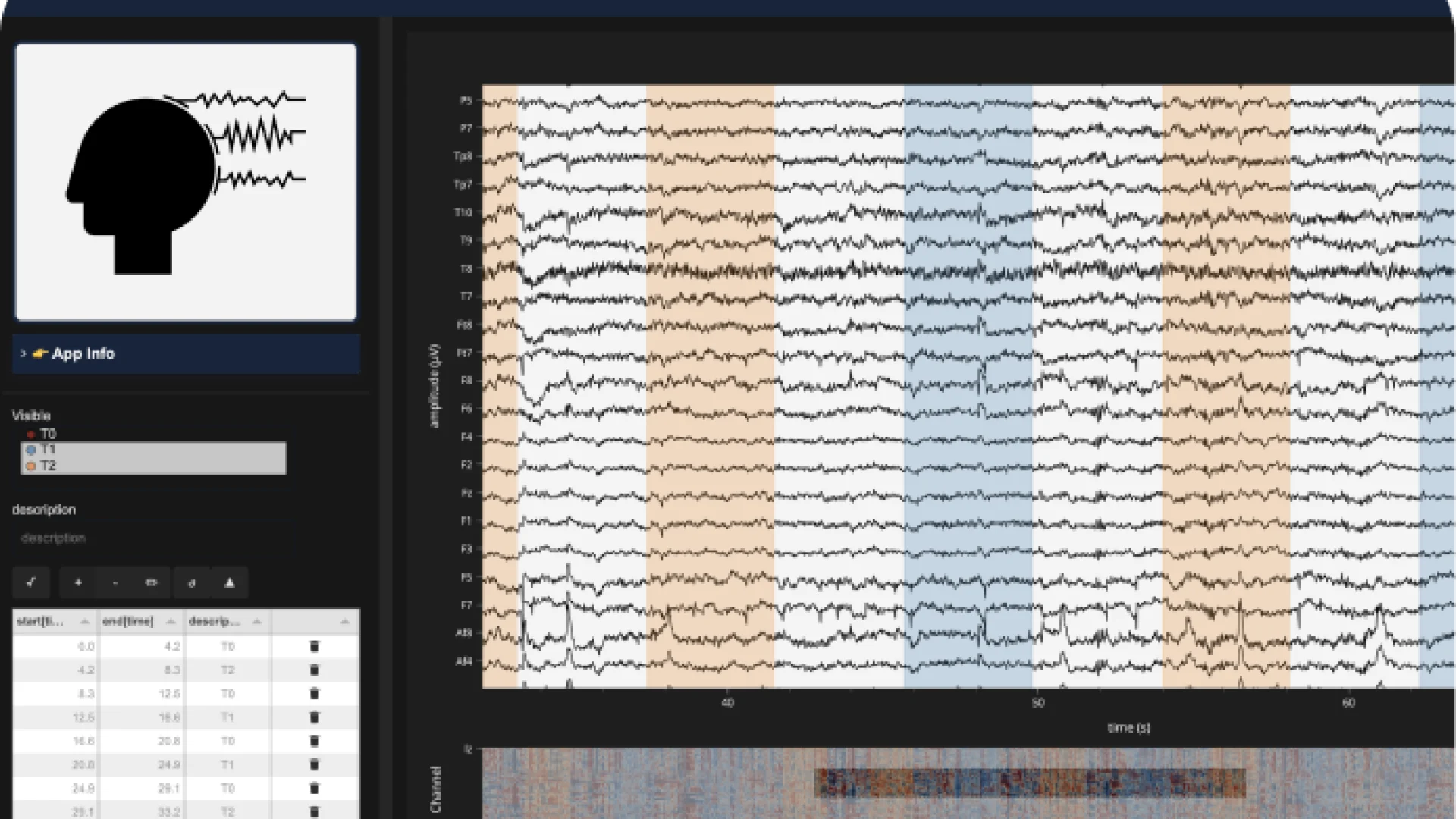

Data Annotation

Build custom annotation tools for time series, images, and plots to capture human insights for AI/ML using open-source tools.

Open Source Software (OSS)

Get expert guidance on applying or optimizing popular open-source libraries like pandas, NumPy, and Dask for your specific needs.

Python Performance Optimization

Optimize Python code using vectorization, Numba compilation, multiprocessing, and low-level programming when needed.

Python Migration

Convert SAS, Spark, MATLAB, or R workflows to high-performance Python while learning best practices.

Air-Gapped Environments

Create secure, controlled software update processes for systems without internet access.

Snowflake® Databases

Set up Python ETL processes, UDFs, and deployments in Snowflake’s cloud platform using Snowpark.

Workflow Integration

Connect the Anaconda Platform to internal systems using open-source libraries for scalable automation.

Example Kickstart Projects

FUNDED BY

Chan Zuckerberg Initiative

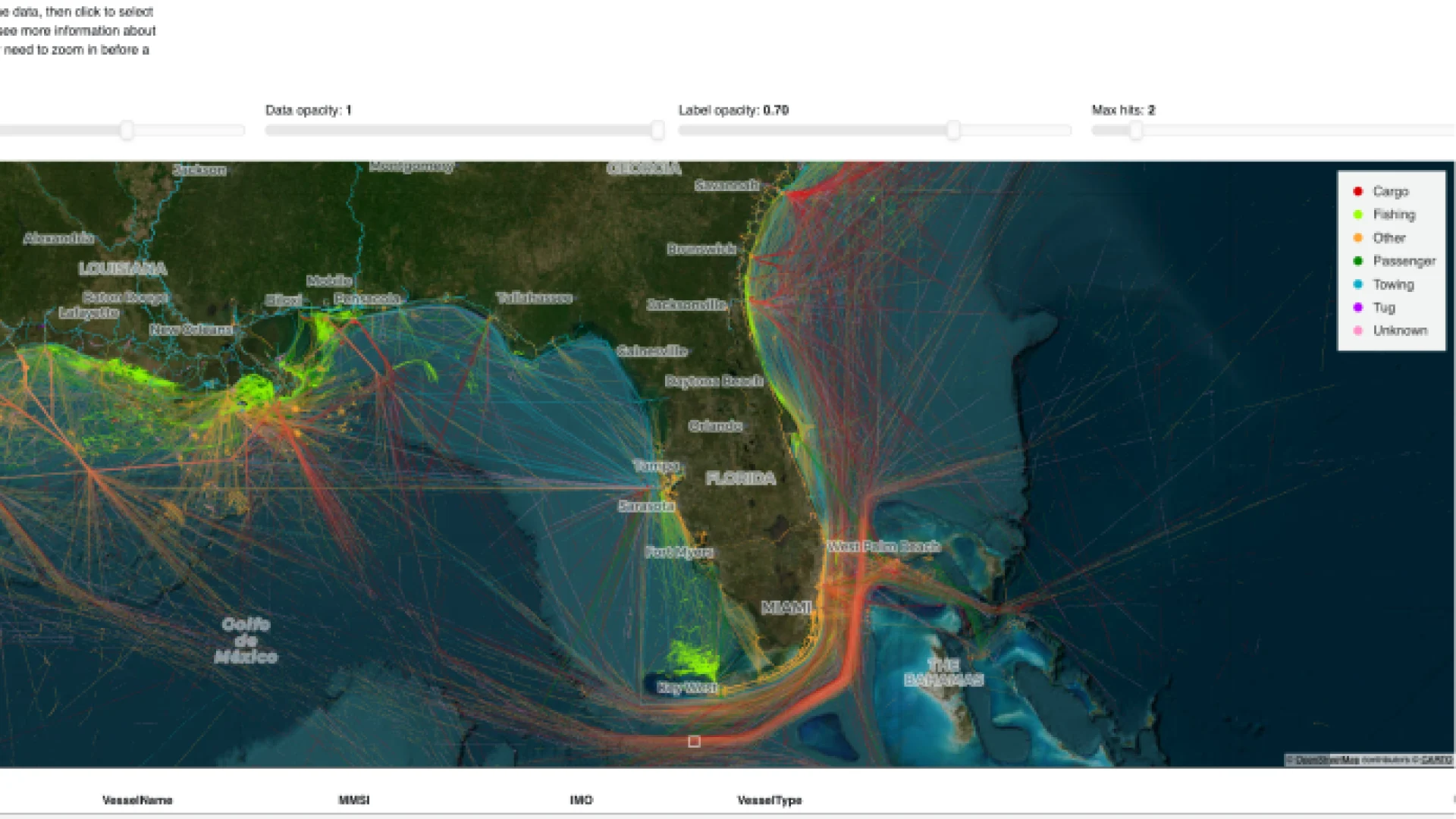

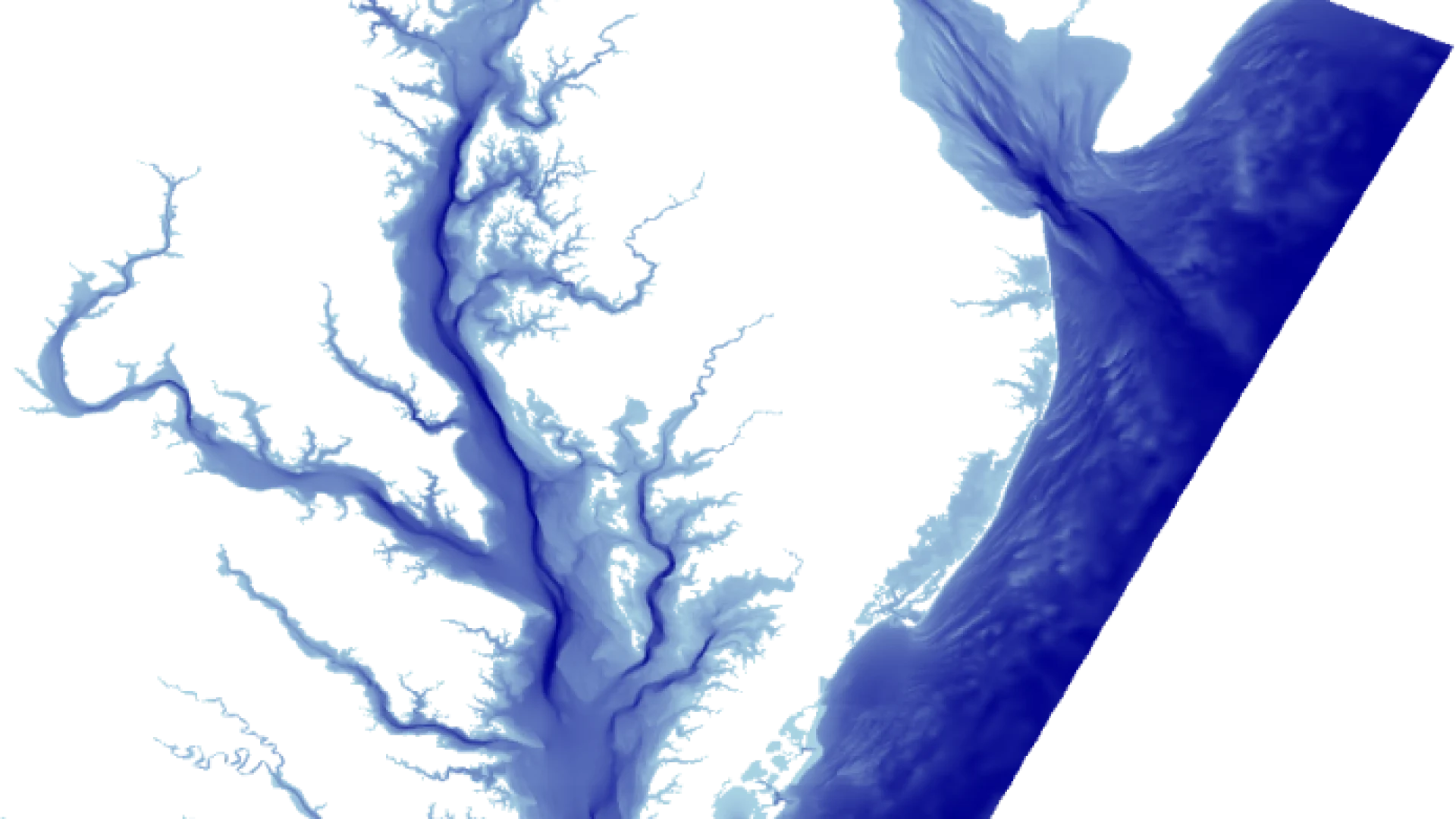

Neuroscience data analysis and visualization

FUNDED BY A

Civil Engineering Organization

FUNDED BY

NASA

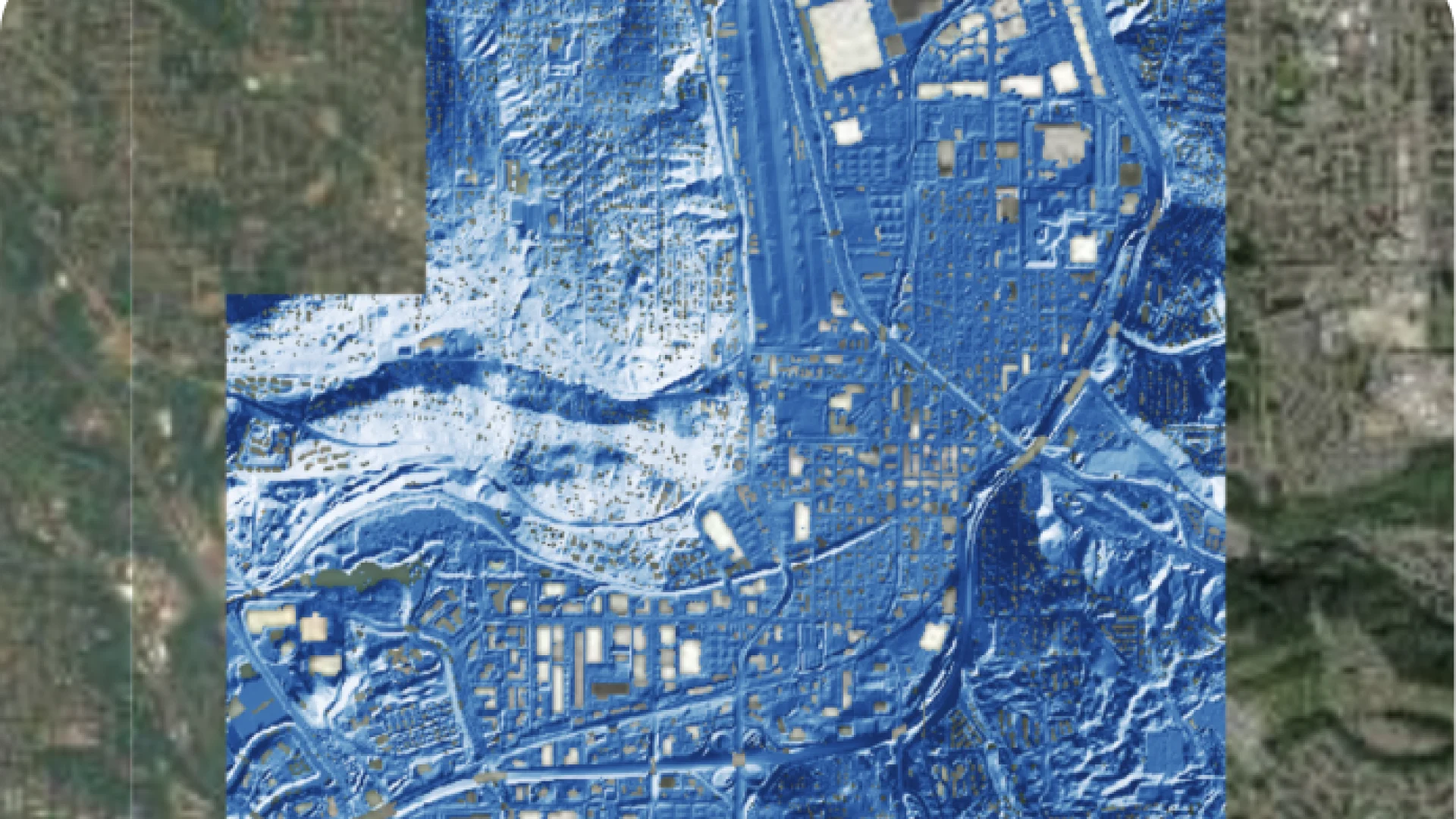

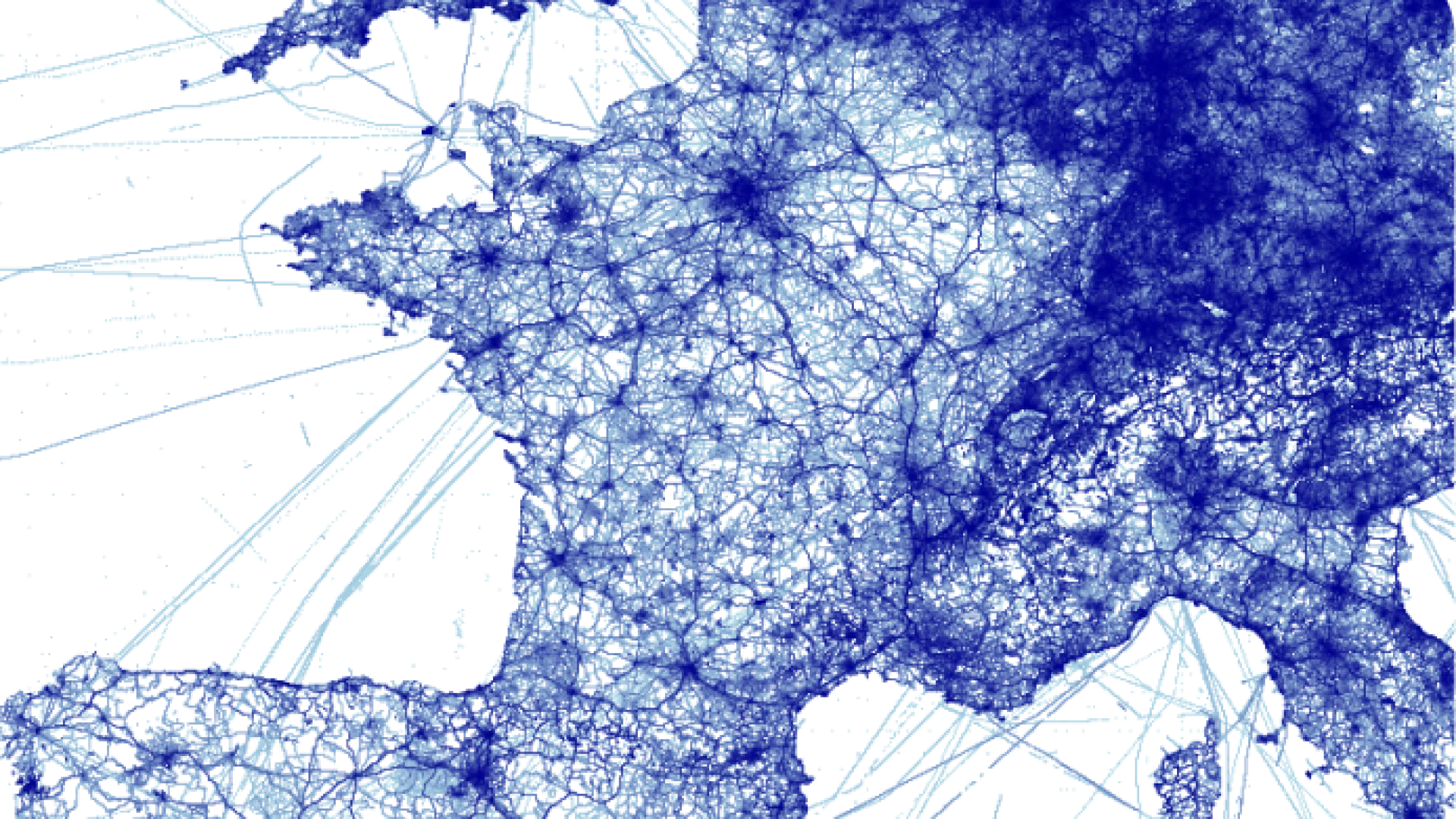

Visualization and ML tools for geographic data

FUNDED BY

DARPA/In-Q-Tel

Tools for rendering very large datasets