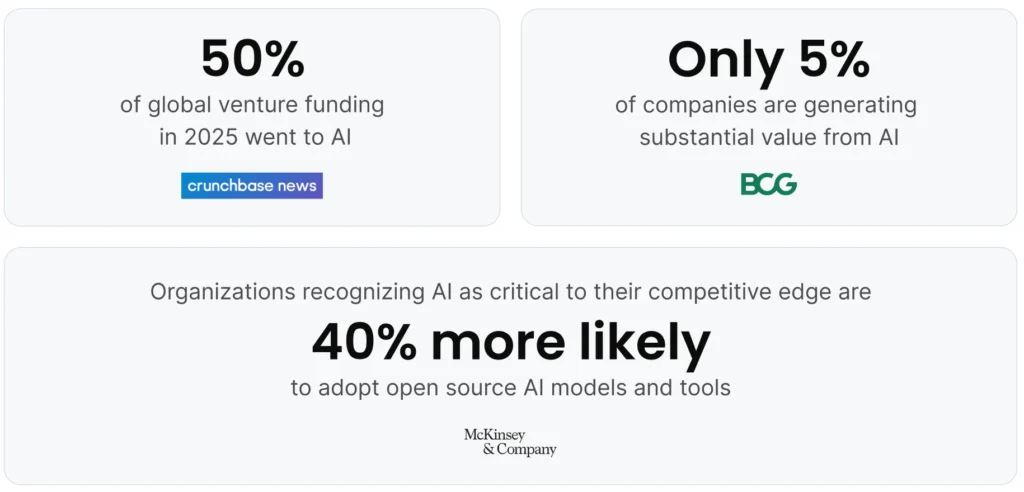

Nearly 50% of all global venture funding went to artificial intelligence in 2025, up from 34% in 2024. Total sector investment eclipsed $202 billion, a 75% increase over the prior year. That level of sustained capital concentration could define which companies lead the next decade.

The business case driving that capital is real. Across time-tested use cases like fraud detection, personalized recommendations, and supply chain optimization, AI has delivered outsized results, often powering new product capabilities and lower costs. Modern generative AI models have only raised the ceiling on what’s possible, giving leadership teams even more reason to move fast.

But speed doesn’t always equal success. A Boston Consulting Group (BCG) study found that only 5% of companies are generating substantial value from AI. BCG calls these “future-built” organizations—companies that have moved beyond using AI for basic automation. Instead, they’re using AI to fundamentally change how their business operates, and they’re outpacing their competitors in both revenue growth and profitability.

Open Source Plays a Key Role in AI

One pattern separates the winners from the stragglers: They’re embracing open source. McKinsey research found that organizations that view AI as critical to their competitive advantage are over 40% more likely to adopt open source AI models and open source tools. More than half of organizations report using open source AI alongside proprietary tools, and those that place a high priority on AI are most likely to use open source technologies.

Open source AI platforms and packages offer powerful capabilities without the hefty licensing fees of proprietary solutions. However, making use of these capabilities typically requires skilled people who know how to choose the tools your organization will use and how to integrate them into your technology stack.

This guide explores the open source AI ecosystem, including the platforms where you do your work and the essential open source packages and libraries that power AI development. We’ll cover what each offers, how they work together, and how to choose the right combination for your organization’s needs.


What Is an Open Source AI Platform?

If you search for “open source AI platform,” you’ll find the term used to describe very different things. Technically, a “platform” implies a hosted service or integrated environment where users do their work—a destination you visit or a unified workspace you operate within. By this definition, an open source AI platform is an environment that brings together the tools, infrastructure, and workflows needed for AI development.

However, true platforms carry ongoing operational costs (servers, bandwidth, maintenance) that their providers must recoup. That’s why they’re usually proprietary, even when they host or integrate open source technologies. Open source is far more common for tools and libraries (software you download and run yourself), because each additional user running the software on their own hardware adds nothing to the developers’ ongoing costs. This is why most open source AI technologies are packages and frameworks rather than hosted platforms.

In practice, when people search for “open source AI platforms,” they’re looking for two distinct things: the environments where they work and the foundational packages those environments integrate. This guide covers both:

➤ Open source AI platforms (like Anaconda, Hugging Face, and OpenAI) provide integrated environments, model repositories, or hosted services for AI development.

➤ Open source packages and libraries (like PyTorch, TensorFlow, Keras, and OpenCV) provide the core functionality for building AI models and can be integrated within platforms.

Why Is Open Source Useful for Developing AI?

While highly capable open source machine learning tools like Theano and scikit-learn date back to the late 2000s, the modern boom in open source AI software started in 2015 with TensorFlow’s release, followed soon after by PyTorch.

These powerful, accessible tools accelerated innovation in software development for data science and AI. As a result, open source AI platforms (and the packages they integrate) have become the foundation of modern machine learning development for several compelling reasons:

- Cost effectiveness: Using open source reduces licensing fees. Organizations can access enterprise-grade AI capabilities without the substantial financial commitment required by commercial alternatives, which makes AI development accessible to startups, research institutions, and enterprises alike. While open source AI tools carry no licensing cost for the core software, organizations still need to budget for compute resources, whether running on-premises or through cloud providers like Amazon Web Services (AWS).

- Flexibility and customization: Open source AI platforms give your team complete control over how the technology works. Need to modify an algorithm for your specific use case? You can. Want to optimize performance for your unique infrastructure? The source code is yours to adjust. This flexibility extends to fine-tuning pre-trained models for domain-specific applications without vendor restrictions.

- Community support and innovation: Major open source AI platforms benefit from the contributions of thousands of developers worldwide. These communities accelerate innovation, identify bugs faster, and create extensive documentation and tutorials that speed up your team’s learning curve. Platforms like Hugging Face have built thriving communities around open source AI models, making state-of-the-art capabilities accessible to everyone.

- Data privacy: When you use proprietary LLM services, your data typically passes through the vendor’s servers, raising important concerns about confidentiality and control. With self-hosted open source AI platforms and tools, your data stays within your infrastructure (whether on-premises or in your own cloud environment), giving you full control over sensitive information.

- Versatility across use cases: Organizations can use open source AI platforms for a wide range of applications, such as computer vision projects, natural language processing, predictive analytics, recommendation engines, fraud detection, autonomous systems, and conversational AI apps. The resulting systems can be fully embedded into operational workflows without any required tie to a proprietary tool that might limit their use.

The 14 Top Open Source AI Platforms and Essential Packages

Open source AI platforms come in very different forms. Some provide integrated environments for managing the entire AI development lifecycle (Anaconda). Others serve as central hubs for discovering and deploying pre-trained models (Hugging Face). Still others provide APIs and tools for accessing cutting-edge AI capabilities (OpenAI).

These platforms integrate essential open source packages and libraries that provide the core functionality for AI development. PyTorch and TensorFlow serve as the foundational deep learning frameworks. OpenCV powers computer vision applications.

Understanding the platforms and the packages they integrate helps you build the right stack for your needs. Many organizations use platforms like Anaconda to manage their environments while leveraging multiple packages like Keras for model development and OpenCV for computer vision tasks.

Here are the top open source AI platforms and the essential packages they integrate.

Open Source AI Platforms

Anaconda

With more than 50 million users worldwide, Anaconda provides a comprehensive open source platform for Python that has become the industry standard for AI-native development.

Created to solve the complex dependency management challenges that plague ML workflows, Anaconda Core provides an enterprise-grade distribution where AI developers and data scientists can seamlessly work with thousands of packages and libraries. Anaconda Catalyst provides access to pre-validated, quantized models with built-in governance controls to accelerate deployment from months to days while maintaining security standards.

Key capabilities:

- Conda package manager for simplified installation and dependency resolution

- Pre-configured distribution with thousands of data science and ML packages

- Cross-platform support for Windows, Linux, and macOS

- Support for development environments including JupyterLab and VS Code

- Repository of curated, tested packages for compatibility and security

- Environment management for reproducible research and deployment

Anaconda serves as the foundation for end-to-end AI and data science workflows. Organizations use it to streamline model development across teams, ensure reproducibility from research to production, manage complex multi-library AI projects, and deploy AI applications at scale. It’s particularly valuable for enterprises that need to maintain security and compliance while giving data scientists flexibility. Teams can use best-in-class open source packages like PyTorch, TensorFlow, scikit-learn, and hundreds of specialized libraries, all managed through a single platform.

Whether you’re deploying on-premises, in AWS, or across hybrid environments, Anaconda simplifies package management and ensures consistent environments from development through production.
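Consistent environments come down to a simple check: do the packages you import match the versions the environment was built with? The sketch below is not part of conda. It uses only Python’s standard importlib.metadata module to compare installed versions against a hypothetical dictionary of pins, illustrating the kind of drift a shared conda environment file is meant to prevent.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def check_pins(pins: dict[str, str]) -> dict[str, Optional[str]]:
    """Compare installed package versions against pinned versions.

    Returns only the packages that differ, mapped to the installed version
    string, or to None if the package is not installed at all.
    """
    mismatches: dict[str, Optional[str]] = {}
    for name, pinned in pins.items():
        try:
            installed = version(name)
        except PackageNotFoundError:
            mismatches[name] = None
            continue
        if installed != pinned:
            mismatches[name] = installed
    return mismatches


if __name__ == "__main__":
    # Hypothetical pins; in practice these would mirror your environment file.
    pins = {"numpy": "1.26.4", "scikit-learn": "1.4.2"}
    for pkg, found in check_pins(pins).items():
        print(f"{pkg}: pinned {pins[pkg]}, found {found or 'not installed'}")
```

An empty result means the running environment matches the pins. Any entry is a difference worth resolving before you compare results across machines.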

Hugging Face

Hugging Face has emerged as a hub for open source AI, hosting millions of pre-trained models along with datasets and collaborative tools. What began as a simple AI chatbot has evolved into a comprehensive open source AI platform for discovering, sharing, and deploying machine learning models.

Key capabilities:

- Model Hub with over 2.5 million open source models available for download

- Datasets library providing access to thousands of curated datasets

- Inference API for testing models without local setup

- Spaces for deploying and sharing ML applications

- Collaborative tools for teams to work together on AI projects

- Integration with PyTorch, TensorFlow, and other major frameworks

Hugging Face can be used for natural language processing (NLP) tasks, computer vision applications, audio processing, and multimodal AI projects. Organizations use Hugging Face to accelerate development by starting with pre-trained models rather than training from scratch, dramatically reducing compute costs and development time.

Teams building chatbots, document analysis systems, content generation tools, and sentiment analysis applications rely on Hugging Face’s ecosystem for rapid prototyping and production deployment. The platform supports fine-tuning open language models such as BERT, GPT-2, and LLaMA for specific tasks, making state-of-the-art AI accessible to organizations of all sizes.

OpenAI

OpenAI operates a proprietary platform that provides API access to its state-of-the-art AI models. The company also maintains several influential open source tools to support the research community. The OpenAI platform enables developers to integrate advanced AI capabilities into applications through paid APIs, while its open source tools have accelerated research in reinforcement learning and multimodal AI.

Platform APIs (proprietary):

- GPT models for text generation and analysis

- DALL-E API for image generation

- Whisper API for speech recognition

- Embeddings API for semantic search and similarity

- Fine-tuning capabilities for customizing models

Open source tools:

- Gym (reinforcement learning toolkit, now maintained by the Farama Foundation as Gymnasium)

- CLIP (vision-language models)

- Whisper (speech recognition codebase)

The OpenAI platform serves organizations that want to build AI-powered applications without managing infrastructure, while OpenAI’s open source tools support researchers developing novel AI techniques.
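Embeddings are what make semantic search work. An embeddings endpoint returns a vector of numbers for each text, and search then ranks documents by how close their vectors are to the query’s vector, most often using cosine similarity. The sketch below shows only that ranking step in plain Python. The three-dimensional vectors are made up for illustration (real embeddings have hundreds or thousands of dimensions), and no API call is made.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: dot(a, b) / (|a| * |b|)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_documents(query_vec: list[float], doc_vecs: dict[str, list[float]]):
    """Return (doc_id, score) pairs sorted from most to least similar."""
    scores = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in doc_vecs.items()]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


# Toy vectors standing in for embeddings returned by an embeddings endpoint.
docs = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "api-rate-limits": [0.0, 0.2, 0.9],
}
query = [0.8, 0.2, 0.1]  # e.g., the embedding of "How do I get my money back?"
for doc_id, score in rank_documents(query, docs):
    print(f"{doc_id}: {score:.3f}")
```

Because cosine similarity ignores vector length, it compares the direction of meaning rather than the size of the numbers, which is why it is the usual default for text embeddings.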

It’s worth noting that OpenAI has a strategic partnership with Microsoft, which has invested over $13 billion in the company since 2019.

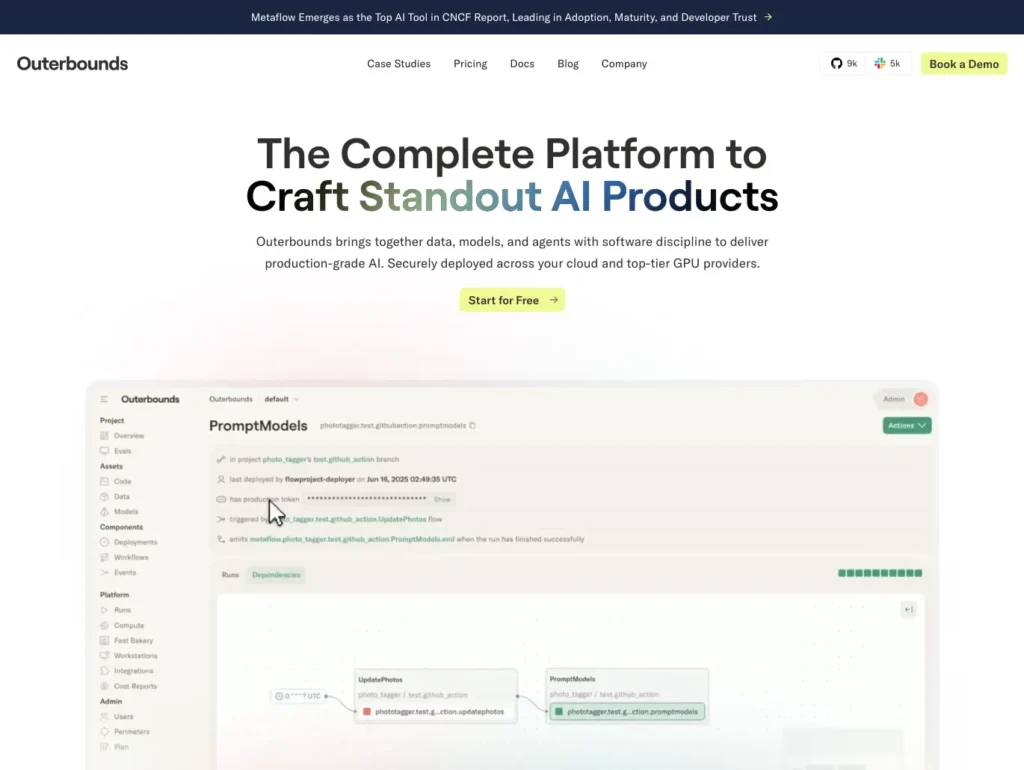

Outerbounds

Outerbounds is a platform for building and deploying production-grade AI systems, built around Metaflow, the open source workflow orchestration framework originally created at Netflix. Trusted by organizations including JPMorgan, Goldman Sachs, GSK, and Bosch, Outerbounds brings software engineering discipline to AI development, enabling teams to move from experimentation to reliable production systems without rebuilding their infrastructure.

Key capabilities:

- Metaflow-powered orchestration for batch workflows, AI agents, and inference

- Bring-your-own-cloud deployment that keeps all data and compute within your own cloud accounts

- Access to GPU resources from major providers including AWS, GCP, Azure, CoreWeave, and Nebius

- Built-in versioning and tracking for all code, data, and models by default

- SOC 2 and HIPAA compliance for regulated industries

- Support for fine-tuning models and hosting inference endpoints on top-tier GPUs

Outerbounds’ bring-your-own-cloud model makes it particularly well-suited for organizations in regulated industries, such as finance, healthcare, and government, where data cannot leave the organization’s own environment. Outerbounds integrates with PyTorch, TensorFlow, and popular AI frameworks including LangChain, and works alongside Anaconda environments for teams that need consistent, reproducible development-to-production workflows.

Essential Open Source AI Packages

These software packages provide core capabilities for building AI models and can be integrated within many open source AI platforms.

PyTorch

Originally developed by Meta’s AI Research lab (FAIR) and now governed by the PyTorch Foundation, PyTorch is one of the most popular deep learning frameworks among researchers and developers. Its Python-first design philosophy and dynamic computational graphs make it intuitive to use and debug, earning it widespread adoption in both academic research and production environments.

Key capabilities:

- Dynamic computation graphs that allow for flexible model architectures

- Strong GPU acceleration support for faster training

- Extensive library ecosystem including TorchVision for computer vision and TorchAudio for audio (TorchText, its former NLP library, is no longer under active development)

- Native support for distributed training across multiple nodes

- Strong integration with Python’s scientific computing stack

PyTorch is used for research and experimentation where model architectures change frequently. It’s ideal for computer vision applications, natural language processing projects, GenAI development, and reinforcement learning. Many organizations use PyTorch for rapid prototyping and fine-tuning pre-trained models before moving them to production. PyTorch integrates well with cloud platforms, including AWS, and can deploy on-premises and in hybrid environments. It’s available through Anaconda’s distribution and powers many of the models on Hugging Face.
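A “dynamic computation graph” means the graph is recorded as operations execute (often called define-by-run), so ordinary Python control flow can change its shape on every call. The toy below is not PyTorch. It is a minimal plain-Python sketch of the idea: each operation records its inputs, and backward() applies the chain rule through whatever graph was actually built. In PyTorch, the equivalent steps are creating tensors with requires_grad=True, computing with them, and calling .backward().

```python
class Value:
    """A scalar that records the operations producing it (a toy define-by-run graph)."""

    def __init__(self, data, parents=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents          # Values this one was computed from
        self._local_grads = local_grads  # d(self)/d(parent) for each parent

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other), (other.data, self.data))

    def backward(self):
        # Topologically order the graph that was built at run time.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        # Chain rule, from the output back toward the inputs.
        self.grad = 1.0
        for node in reversed(order):
            for parent, local in zip(node._parents, node._local_grads):
                parent.grad += local * node.grad


def f(x, y):
    # The branch taken at run time determines the graph's structure.
    if x.data > 0:
        return x * y + x
    return x * x


x, y = Value(2.0), Value(3.0)
out = f(x, y)
out.backward()
print(out.data, x.grad, y.grad)  # 8.0 4.0 2.0
```

For x = 2 and y = 3, the positive branch computes xy + x, so the gradients are y + 1 = 4 and x = 2. With a negative x, the same function would build a different graph (x * x), which is the flexibility the paragraph above describes.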

TensorFlow

Created by Google Brain, TensorFlow is one of the most comprehensive machine learning frameworks available. Its production-ready ecosystem includes tools for every stage of the ML lifecycle, from data preparation through deployment at scale.

Key capabilities:

- TensorFlow Serving for deploying models in production environments

- TensorFlow Lite (now rebranded as LiteRT) for mobile and embedded device deployment

- Keras integration providing a high-level API for rapid development

- TensorBoard for visualization of training metrics and model graphs

- Robust support for distributed training and TPU acceleration

TensorFlow is used for large-scale production deployments. Organizations use it for image classification, speech recognition, time series forecasting, and recommendation systems. Its mobile deployment capabilities make it popular for on-device AI apps in smartphones and IoT devices. TensorFlow works seamlessly with major cloud providers, including AWS, and supports distributed training across multiple nodes. It integrates with platforms like Anaconda for environment management and Hugging Face for model sharing.

Keras

Keras has long been integrated into TensorFlow as its official high-level API (tf.keras), yet it maintains its identity as a user-friendly deep learning framework designed for fast experimentation, and since Keras 3 it can also run on JAX and PyTorch. Its design principle of being “simple, consistent, and extensible” has made it a favorite among both beginners and experienced practitioners.

Key capabilities:

- Intuitive API that emphasizes readability and ease of use

- Modular architecture allowing easy construction of neural networks

- Support for multiple backend engines (JAX, TensorFlow, and PyTorch as of Keras 3)

- Extensive pre-trained models available through Keras Applications

- Built-in support for common layer types and training configurations

Keras is used for rapid prototyping and educational purposes. Data scientists use it for image classification, text analysis, time series prediction, and transfer learning projects. Its accessibility makes it ideal for teams transitioning into deep learning or organizations that need to quickly validate AI concepts through fine-tuning existing models. Keras is available through Anaconda, and its models can be easily shared on Hugging Face and deployed using the same infrastructure as TensorFlow.

OpenCV

The Open Source Computer Vision Library (OpenCV) has been the cornerstone of computer vision development for over two decades. Originally developed by Intel, this extensive computer vision library provides over 2,500 optimized algorithms for image and video analysis.

Key capabilities:

- Comprehensive collection of classical and modern computer vision algorithms

- Real-time processing capabilities for video streams

- Cross-platform support including Windows, Linux, macOS, iOS, and Android

- Bindings for Python, C++, Java, and other languages

- Integration with deep learning frameworks like PyTorch and TensorFlow

OpenCV is used to power machine learning models and applications in facial recognition, object detection, motion tracking, augmented reality, and medical image analysis. It’s widely used in robotics for vision-based navigation, in retail for customer analytics, and in manufacturing for quality-control inspections. OpenCV integrates seamlessly with PyTorch and TensorFlow for hybrid approaches that combine classical computer vision with deep learning, and it’s readily available through the Anaconda distribution.
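As an example of the classical algorithms mentioned above, the Sobel operator estimates image gradients, a common first step in edge detection. OpenCV provides this as cv2.Sobel (and a full edge detector as cv2.Canny). The plain-Python sketch below is not OpenCV’s implementation. It only slides the same 3×3 Sobel filter over a tiny made-up grayscale image stored as nested lists, so you can see what the operation computes.

```python
# 3x3 Sobel kernel for horizontal gradients (responds to vertical edges).
SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]


def sobel_x(image):
    """Apply the Sobel-x filter to interior pixels; border pixels are left at 0."""
    h, w = len(image), len(image[0])
    out = [[0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            out[r][c] = sum(
                SOBEL_X[i][j] * image[r + i - 1][c + j - 1]
                for i in range(3)
                for j in range(3)
            )
    return out


# A 4x5 image: dark on the left, bright on the right, so one vertical edge.
img = [[0, 0, 255, 255, 255] for _ in range(4)]
for row in sobel_x(img):
    print(row)
```

The filter responds strongly only in the columns next to the dark-to-bright transition and returns 0 in flat regions. Libraries like OpenCV run the same idea in optimized native code on full-size images and video frames.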

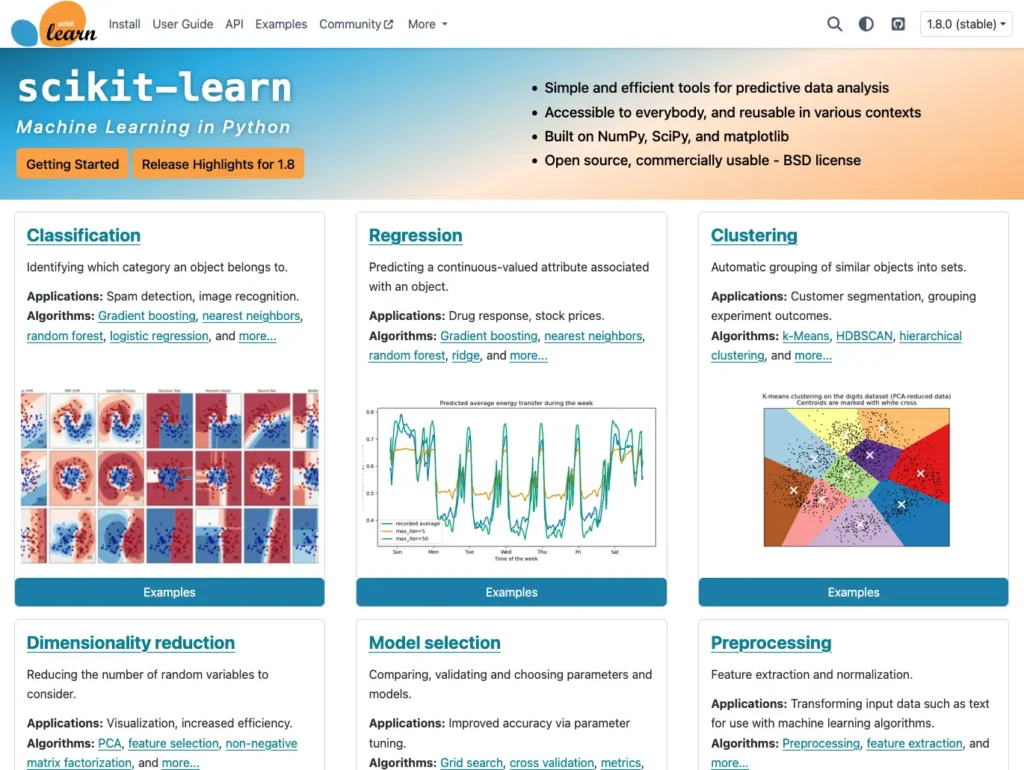

Scikit-learn

Scikit-learn is one of the most widely used Python libraries for machine learning, providing a consistent, well-documented interface for classical ML algorithms. Built on NumPy, SciPy, and Matplotlib, it has become the standard toolkit for data scientists working on predictive modeling, classification, regression, and clustering tasks.

Key capabilities:

- Comprehensive collection of supervised and unsupervised learning algorithms

- Consistent API design that makes switching between algorithms straightforward

- Built-in tools for model evaluation, selection, and hyperparameter tuning

- Preprocessing utilities for feature engineering and data transformation

- Pipeline tools for chaining preprocessing and modeling steps reproducibly

- Available through Anaconda and compatible with PyTorch and TensorFlow workflows

Scikit-learn is used across virtually every industry for predictive analytics, customer segmentation, anomaly detection, and feature engineering. Data scientists rely on it for exploratory modeling and benchmarking before graduating to deep learning frameworks for more complex tasks. Its consistent API design makes it exceptionally accessible for teams building their first models, while its breadth of algorithms and utilities keeps it relevant for experienced practitioners. Scikit-learn integrates naturally into end-to-end workflows managed through Anaconda and pairs well with MLflow for experiment tracking.
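The consistent API is concrete. Every scikit-learn estimator exposes fit, transformers add transform, and predictors add predict, which is what lets sklearn.pipeline.make_pipeline(StandardScaler(), LogisticRegression()) chain steps together. The sketch below imitates that convention with toy classes in plain Python so the mechanics are visible. The class names are hypothetical, and this is not scikit-learn’s code.

```python
class MeanCenterer:
    """Toy transformer: learns per-feature means in fit, subtracts them in transform."""

    def fit(self, X, y=None):
        n = len(X)
        self.means_ = [sum(col) / n for col in zip(*X)]
        return self

    def transform(self, X):
        return [[v - m for v, m in zip(row, self.means_)] for row in X]


class NearestCentroid:
    """Toy classifier: predicts the label whose training centroid is closest."""

    def fit(self, X, y):
        groups = {}
        for row, label in zip(X, y):
            groups.setdefault(label, []).append(row)
        self.centroids_ = {
            label: [sum(col) / len(rows) for col in zip(*rows)]
            for label, rows in groups.items()
        }
        return self

    def predict(self, X):
        def dist2(a, b):
            return sum((p - q) ** 2 for p, q in zip(a, b))

        return [min(self.centroids_, key=lambda lbl: dist2(row, self.centroids_[lbl])) for row in X]


class SimplePipeline:
    """Chains transformers and a final estimator using only fit/transform/predict."""

    def __init__(self, *steps):
        self.steps = steps

    def fit(self, X, y):
        for step in self.steps[:-1]:
            X = step.fit(X, y).transform(X)
        self.steps[-1].fit(X, y)
        return self

    def predict(self, X):
        for step in self.steps[:-1]:
            X = step.transform(X)
        return self.steps[-1].predict(X)


X_train = [[1.0, 2.0], [1.5, 1.8], [8.0, 8.0], [9.0, 8.5]]
y_train = ["low", "low", "high", "high"]
model = SimplePipeline(MeanCenterer(), NearestCentroid()).fit(X_train, y_train)
print(model.predict([[1.2, 2.1], [8.5, 9.0]]))  # ['low', 'high']
```

Because each step follows the same method contract, any step can be swapped without touching the pipeline logic. That is the property that makes switching between scikit-learn algorithms straightforward.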

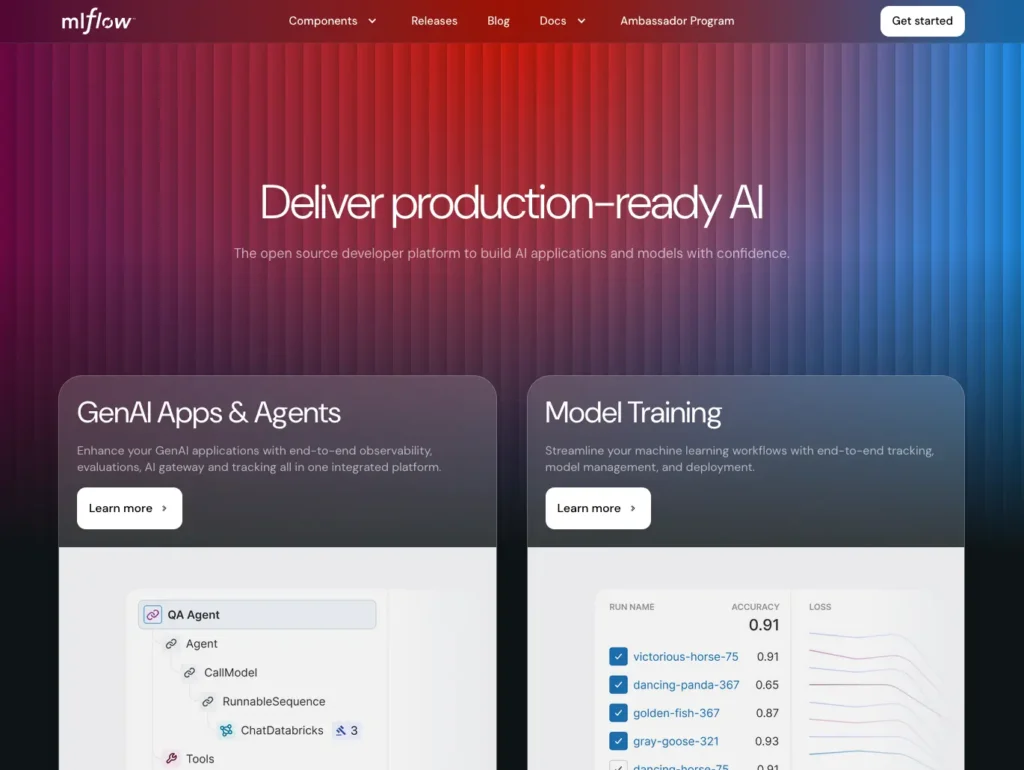

MLflow

MLflow is an open source platform for managing the end-to-end machine learning lifecycle. Developed by Databricks, it addresses one of the most persistent challenges in ML development: keeping track of experiments, reproducing results, and managing models from development through production deployment.

Key capabilities:

- Experiment tracking for logging parameters, metrics, and artifacts across runs

- Model registry for versioning, staging, and managing models in production

- MLflow Projects for packaging and reproducing ML code across environments

- Framework-agnostic design supporting PyTorch, TensorFlow, scikit-learn, and others

- REST API and UI for querying and visualizing experiment results

- Available through Anaconda and integrates with major cloud platforms including AWS

MLflow is used by data science teams that need operational discipline across multiple experiments and model iterations. Organizations use it to compare model performance across runs, manage the transition from experimentation to production, and maintain audit trails for compliance purposes. Its framework-agnostic design makes it a natural fit for teams that use multiple open source packages—tracking experiments in PyTorch, scikit-learn, and TensorFlow within a single, unified system. MLflow integrates seamlessly with Anaconda environments, ensuring reproducibility from development through deployment.
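At its core, experiment tracking means recording each run’s parameters and metrics somewhere durable so that runs can be compared later. With MLflow that takes a few calls such as mlflow.start_run(), mlflow.log_param(), and mlflow.log_metric(). The standard-library sketch below imitates that bookkeeping with one JSON file per run, purely to show what is being stored. The function names, file layout, and results are hypothetical and do not reflect MLflow’s storage format.

```python
import json
import tempfile
import uuid
from pathlib import Path


def log_run(tracking_dir: Path, params: dict, metrics: dict) -> str:
    """Write one run's parameters and metrics to its own JSON file; return the run ID."""
    run_id = uuid.uuid4().hex
    record = {"run_id": run_id, "params": params, "metrics": metrics}
    (tracking_dir / f"{run_id}.json").write_text(json.dumps(record))
    return run_id


def best_run(tracking_dir: Path, metric: str, higher_is_better: bool = True) -> dict:
    """Load every logged run and return the one with the best value of `metric`."""
    runs = [json.loads(p.read_text()) for p in tracking_dir.glob("*.json")]
    pick = max if higher_is_better else min
    return pick(runs, key=lambda run: run["metrics"][metric])


with tempfile.TemporaryDirectory() as tmp:
    tracking_dir = Path(tmp)
    # Hypothetical results from three training runs with different learning rates.
    for lr, acc in [(0.1, 0.81), (0.01, 0.88), (0.001, 0.84)]:
        log_run(tracking_dir, params={"learning_rate": lr}, metrics={"accuracy": acc})
    winner = best_run(tracking_dir, "accuracy")
    print(winner["params"], winner["metrics"])  # {'learning_rate': 0.01} {'accuracy': 0.88}
```

A real tracking server adds what this sketch lacks: artifact storage, a UI for comparing runs, a model registry, and concurrent access from a whole team.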

LangChain

LangChain is an open source framework designed to simplify the development of applications powered by large language models. It provides standardized interfaces and composable components for connecting LLMs with external data sources, APIs, and tools—enabling developers to build sophisticated AI applications that go beyond simple text generation.

Key capabilities:

- Chains for composing sequences of LLM calls and data transformations

- Retrieval-augmented generation (RAG) tools for grounding LLMs in your own data

- Agent frameworks for building systems that can reason and take actions autonomously

- Integrations with hundreds of data sources, vector databases, and external APIs

- Support for multiple LLM providers and open source models

- Compatible with Anaconda environments and deployable on-premises or in AWS

LangChain is used for building document question-answering systems, AI-powered customer service agents, code generation tools, and data analysis assistants. Organizations use it to connect LLMs to proprietary data sources through retrieval-augmented generation, enabling AI systems that answer questions grounded in internal knowledge bases rather than general training data alone. Development teams building enterprise AI applications rely on LangChain to manage the complexity of multi-step LLM workflows, tool integrations, and memory management. It works alongside Hugging Face models and OpenAI APIs, giving developers flexibility in their choice of underlying models.
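Retrieval-augmented generation has two steps that LangChain packages into reusable components: retrieve the passages most relevant to a question, then place them in the prompt so the model answers from them. The framework-free sketch below shows both steps. It uses a deliberately naive word-overlap retriever (production systems typically use embeddings and a vector database) and stops at building the prompt, so no LLM is called. The documents and prompt wording are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for real embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the question."""
    q_words = tokenize(question)
    ranked = sorted(documents, key=lambda doc: len(q_words & tokenize(doc)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, passages: list[str]) -> str:
    """Ground the model by instructing it to answer only from the retrieved context."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer the question using only the context below. "
        "If the answer is not in the context, say you don't know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
    )


knowledge_base = [
    "Employees accrue 1.5 vacation days per month of service.",
    "The VPN must be used when accessing internal dashboards remotely.",
    "Expense reports are due within 30 days of purchase.",
]
question = "How many vacation days do employees accrue per month?"
print(build_prompt(question, retrieve(question, knowledge_base, k=1)))
```

The resulting prompt would then be sent to whichever model you choose, hosted or local. Keeping retrieval separate from generation is what lets frameworks like LangChain swap either piece independently.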

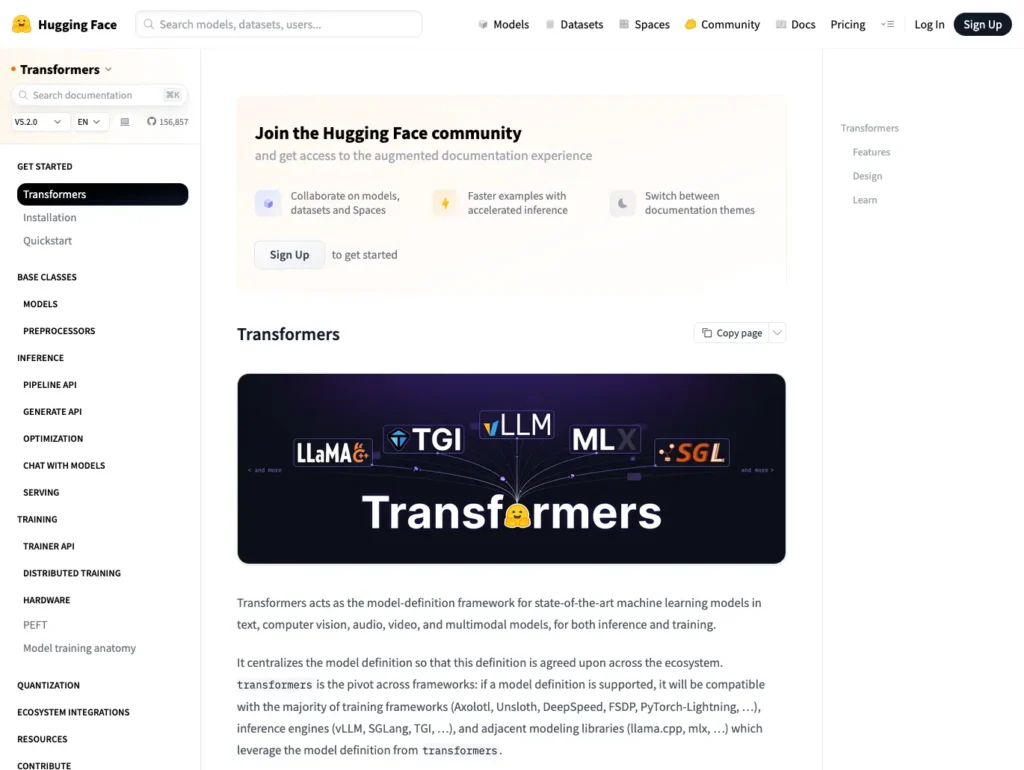

Transformers

The Transformers library, developed and maintained by Hugging Face, provides access to thousands of pre-trained models for natural language processing, computer vision, audio processing, and multimodal tasks. It has become the foundational Python library for working with modern deep learning architectures, including BERT, GPT, LLaMA, and Whisper.

Key capabilities:

- Access to over 500,000 pre-trained models through the Hugging Face Model Hub

- Consistent API for inference, fine-tuning, and training across model architectures

- Support for PyTorch, TensorFlow, and JAX backends

- Pipelines for common tasks including text classification, summarization, and translation

- Support for parameter-efficient fine-tuning methods such as LoRA through integration with Hugging Face’s PEFT library

- Available through Anaconda and deployable on-premises or in cloud environments including AWS

Transformers is used across virtually every AI application involving language, vision, or audio. Data scientists use it to quickly prototype NLP solutions by leveraging pre-trained models rather than training from scratch. Organizations fine-tune models from the Hugging Face Hub on domain-specific data to build custom solutions for document analysis, sentiment classification, named entity recognition, and content generation. The library’s broad framework support means it integrates naturally into existing workflows, whether teams are working in PyTorch, TensorFlow, or managed through Anaconda environments.
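Parameter-efficient methods like LoRA make fine-tuning cheaper in a way that is easy to quantify. Instead of updating a full weight matrix of shape d×k, LoRA freezes it and trains a low-rank update BA, where B is d×r and A is r×k. The trainable parameters per matrix therefore drop from d·k to r·(d + k). The calculation below uses an illustrative 4096×4096 matrix and rank 8. These sizes are assumptions chosen for the example, not taken from any specific model.

```python
def lora_trainable_params(d: int, k: int, r: int) -> tuple[int, int]:
    """Return (full fine-tuning params, LoRA params) for one d x k weight matrix.

    LoRA trains B (d x r) and A (r x k) while the original matrix stays frozen.
    """
    full = d * k
    lora = r * (d + k)
    return full, lora


full, lora = lora_trainable_params(d=4096, k=4096, r=8)
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {lora / full:.2%}")
# full: 16,777,216  LoRA: 65,536  ratio: 0.39%
```

Training well under 1% of the parameters for each adapted matrix is why LoRA-style fine-tuning can fit on far smaller GPUs than full fine-tuning.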

Instructor

Instructor is an open source Python library that simplifies extracting structured, validated data from large language models. By combining LLM capabilities with Pydantic data validation, Instructor enables developers to reliably get predictable, typed outputs from models that would otherwise return unstructured text, making LLMs practical for production applications that require consistent data formats.

Key capabilities:

- Structured output extraction from LLMs using Pydantic models

- Automatic validation and retry logic for ensuring output quality

- Support for multiple LLM providers including OpenAI, Anthropic, and open source models

- Streaming support for real-time structured data extraction

- Partial validation for handling incomplete or streaming responses

- Lightweight integration compatible with existing Python and Anaconda environments

Instructor is used by engineering teams building production AI applications that require reliable, structured outputs for tasks such as extracting entities from documents, classifying customer inquiries, parsing unstructured data into database-ready formats, and generating structured reports from free-form text. Its validation and retry logic addresses one of the most common failure modes in LLM applications: outputs that don’t conform to expected formats. Teams building data pipelines that incorporate LLMs find Instructor essential for bridging the gap between probabilistic model outputs and the deterministic data structures their downstream systems require.
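The validate-and-retry loop is the heart of this pattern: parse the model’s output, check it against a schema, and if the check fails, ask again with the validation error included. Instructor does this with a Pydantic model passed as response_model. The sketch below reproduces the loop using only the standard library (a dataclass and manual checks instead of Pydantic). It is not Instructor’s code, and fake_llm is a hypothetical stand-in that returns a malformed answer first so the retry path runs.

```python
import json
from dataclasses import dataclass


@dataclass
class Ticket:
    category: str
    priority: int


ALLOWED_CATEGORIES = {"billing", "technical", "account"}


def validate(raw: str) -> Ticket:
    """Parse JSON and enforce the schema; raise ValueError with a readable message."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if data.get("category") not in ALLOWED_CATEGORIES:
        raise ValueError(f"category must be one of {sorted(ALLOWED_CATEGORIES)}")
    if not isinstance(data.get("priority"), int) or not 1 <= data["priority"] <= 5:
        raise ValueError("priority must be an integer from 1 to 5")
    return Ticket(data["category"], data["priority"])


def extract(prompt: str, llm, max_retries: int = 3) -> Ticket:
    """Call the model, validate, and re-prompt with the error until the output conforms."""
    message = prompt
    for _ in range(max_retries):
        raw = llm(message)
        try:
            return validate(raw)
        except ValueError as err:
            message = f"{prompt}\nYour previous answer was invalid: {err}. Return corrected JSON."
    raise RuntimeError(f"no valid output after {max_retries} attempts")


def fake_llm(message: str) -> str:
    # Hypothetical model: the first answer is off-schema, the corrected one is valid.
    if "previous answer was invalid" in message:
        return '{"category": "billing", "priority": 2}'
    return '{"category": "payments", "priority": "high"}'


print(extract("Classify: 'I was charged twice this month.'", fake_llm))
# Ticket(category='billing', priority=2)
```

Feeding the specific validation error back to the model, rather than simply retrying, is what gives the second attempt a good chance of conforming.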

Ollama

Ollama is an open source tool that makes it straightforward to run large language models locally on your own hardware. By packaging models with their dependencies and providing a simple API, Ollama removes the complexity of setting up and managing LLM inference infrastructure, enabling developers and organizations to run powerful open source models without sending data to external services.

Key capabilities:

- Simple installation and model management for popular open source LLMs including LLaMA, Mistral, and Gemma

- Local REST API compatible with OpenAI’s API format for easy integration

- Support for running models on CPUs and GPUs across macOS, Linux, and Windows

- Model customization through Modelfiles for system prompts and parameter tuning

- Integration with LangChain, LlamaIndex, and other LLM application frameworks

- On-premises deployment for air-gapped or data-sensitive environments

Ollama is used by developers who need to prototype and test LLM applications without incurring API costs or sending proprietary data to third-party services. Organizations in regulated industries, including healthcare, finance, and government, rely on Ollama to run AI capabilities entirely within their own infrastructure, satisfying data privacy and compliance requirements. Development teams use it to build and test LangChain applications locally before deploying against hosted models in production. Ollama’s compatibility with Anaconda environments makes it straightforward to incorporate local LLM inference into existing data science and AI workflows.
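Because Ollama serves models over a local HTTP API (by default on port 11434), any language can call it without an SDK. The sketch below uses only Python’s standard library to send a non-streaming request to Ollama’s /api/generate endpoint. It assumes Ollama is running locally and that a model named llama3.2 has already been pulled; substitute whichever model you use.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local address


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request aimed at the local Ollama server."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the request and return the generated text from the 'response' field."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, generate("llama3.2", "Summarize retrieval-augmented generation in one sentence.") returns the model’s reply as a string, and the prompt never leaves the machine. Ollama’s OpenAI-compatible endpoints (under /v1) are the alternative when you want existing OpenAI client code to point at a local model.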

Key Considerations When Choosing an Open Source AI Platform

To choose the right open source AI platform for your organization, start by examining the problems you’re solving, your team’s expertise, and your infrastructure constraints. Once you have a clear understanding of these aspects of your situation, you can begin to sketch out the best tech stack for your AI applications.

1. Automation

Modern AI development involves numerous repetitive tasks, such as data preprocessing, hyperparameter tuning, model evaluation, and deployment. Tools with strong automation capabilities can handle these tasks with minimal manual intervention and help your team work more productively.

Automation is critical as you scale from experimental models to production systems serving real users. Automated retraining pipelines ensure your models stay current with new data. Automated monitoring detects performance degradation before it impacts your business. These capabilities transform AI from a research project into a reliable operational system.
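A minimal version of automated monitoring is a rule that compares recent live performance against the accuracy measured at deployment and triggers retraining when the gap exceeds a tolerance. The sketch below assumes labeled outcomes arrive after each prediction. The class name, window size, and threshold are hypothetical choices, and real systems would typically also watch for drift in the input data itself.

```python
from collections import deque


class AccuracyMonitor:
    """Flags retraining when rolling accuracy falls too far below the deployment baseline."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 100):
        self.baseline = baseline
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # 1 = correct prediction, 0 = wrong

    def record(self, prediction, actual) -> None:
        self.outcomes.append(1 if prediction == actual else 0)

    def rolling_accuracy(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else self.baseline

    def needs_retraining(self) -> bool:
        # Wait for a full window so a handful of early errors doesn't trigger retraining.
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.rolling_accuracy() < self.baseline - self.tolerance


monitor = AccuracyMonitor(baseline=0.90, tolerance=0.05, window=10)
# Simulated feedback: the model starts missing more often as the data shifts.
for pred, actual in [(1, 1)] * 6 + [(1, 0)] * 4:
    monitor.record(pred, actual)
print(monitor.rolling_accuracy(), monitor.needs_retraining())  # 0.6 True
```

In a production pipeline, a True result would kick off the automated retraining job rather than waiting for someone to notice the degradation.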

2. Integration Options

AI platforms rarely work in isolation. They need to connect with your data warehouses, business intelligence tools, cloud infrastructure, CI/CD (continuous integration and continuous delivery/deployment) pipelines, and monitoring systems. A platform with robust integration options fits naturally into your existing technology stack rather than forcing you to rebuild around it.

Look for platforms that support standard APIs, popular data formats, and common deployment targets. The ability to integrate with Kubernetes, Docker, major cloud providers like AWS, and data processing frameworks like Apache Spark can significantly accelerate your time to production. Consider whether you need on-premises deployment, cloud deployment, or hybrid options. Seamless integration also means your team spends less time on plumbing and more time on innovation.

3. Ease of Implementation

The best AI platform for your organization is one your team will actually use. Steep learning curves and complex setup processes can stall AI initiatives before they produce value. Platforms that prioritize developer experience, with clear documentation, intuitive APIs, and sensible defaults, allow teams to move quickly from concept to working prototype.

Easy implementation doesn’t mean sacrificing power. The most effective platforms provide simple interfaces for common tasks while still exposing advanced functionality for specialized needs. This approach lets beginners be productive immediately while giving experienced practitioners the control they need.

4. Scalability

AI models that work beautifully on a laptop with sample data can fail dramatically when deployed against production datasets. Scalability encompasses both technical performance (handling growing data volumes and request loads) and operational maturity like monitoring, logging, and graceful degradation.

Consider how the platform handles distributed training across multiple nodes and whether it can scale horizontally as your needs grow. Security considerations become paramount at scale. Can your platform handle sensitive data appropriately? Does it support encryption, access controls, and audit logging? As your AI systems become more central to business operations, the ability to scale securely prevents success from becoming a liability.

5. Vendor Lock-in

Make every effort to ensure that workflows you develop in the platform will be portable outside of it, even if that portability is inconvenient. Many successful organizations use multiple platforms and choose the best tool for each use case, rather than forcing everything through a single solution.

It’s important to note that locking into a proprietary model (e.g., OpenAI’s GPT models) can be risky: if business or technical requirements change and you need to replace it, the switch can alter results across every application that uses the model.
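One common way to make replacing a model cheaper is to route every model call through a thin interface your own code owns, so changing providers means writing one new adapter rather than editing every application. The sketch below uses Python’s typing.Protocol. The two backend classes are hypothetical placeholders that return canned strings instead of calling a real hosted API or local model.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface application code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class HostedModel:
    """Hypothetical adapter for a proprietary API (network call omitted)."""

    def complete(self, prompt: str) -> str:
        return f"[hosted] {prompt}"


class LocalModel:
    """Hypothetical adapter for a self-hosted open source model (call omitted)."""

    def complete(self, prompt: str) -> str:
        return f"[local] {prompt}"


def summarize_ticket(model: TextModel, ticket: str) -> str:
    # Application logic sees only TextModel, never a vendor SDK.
    return model.complete(f"Summarize: {ticket}")


BACKENDS = {"hosted": HostedModel, "local": LocalModel}


def get_model(name: str) -> TextModel:
    """Pick the backend from configuration; swapping providers is a one-line change."""
    return BACKENDS[name]()


print(summarize_ticket(get_model("local"), "Printer offline"))  # [local] Summarize: Printer offline
```

The interface contains the code changes but not the behavior changes. Running the same evaluation prompts against the old and new adapters is still how you catch differences in results before switching.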

Build Your Open Source AI Platform With Anaconda

While individual open source packages like PyTorch and TensorFlow are powerful, and platforms like Hugging Face provide valuable model repositories, most organizations need an integrated environment that brings everything together seamlessly.

Anaconda eliminates the complexity of managing dependencies across multiple packages and libraries. Our conda package manager ensures that PyTorch, TensorFlow, OpenCV, and hundreds of other packages work together without conflicts. Through Anaconda’s curated repository, you get tested, secure versions of every tool you need, from foundational frameworks to specialized libraries.

The platform provides more than just package management. With integrated development environments like JupyterLab, you can experiment with models from Hugging Face, train with PyTorch or TensorFlow, and process images with OpenCV—all within a unified workspace. Environment management ensures that what works on your laptop will work in production, eliminating the “it works on my machine” problem that plagues many data science teams.

Ready to Get Started?

Anaconda provides an integrated environment where open source AI platforms and packages can seamlessly work together. Our conda package manager eliminates dependency conflicts and complex setup processes, letting your team focus on building AI solutions rather than fighting with installations. Through Anaconda Cloud, you can easily share environments, collaborate with team members, and deploy models with confidence.

Whether you’re taking your first steps in AI development or scaling sophisticated production systems, Anaconda Learning offers courses and resources to help your team master these tools. From foundational concepts to advanced techniques, our learning platform accelerates your journey from experimentation to deployment.

Explore what’s possible with open source AI platforms using Anaconda.