Most executives would tell you their organization has an AI strategy. They’d also admit, if pressed, that it isn’t being enforced across the tools their teams use every day.

It’s happening across the board. 70% of IT leaders say they have a centralized AI strategy, but only 34% can actually apply it to their enterprise applications, Gartner® research shows. Tech leaders are expected to approve AI investments and answer for AI risk in the same breath, and very few have the infrastructure to manage that risk.


What is a centralized AI strategy?

In our understanding, Gartner characterizes a centralized AI strategy as an enterprise approach in which strategic decision-making, governance, standards and certain core capabilities for AI are consolidated under a central authority or group so that those policies, controls and shared services are applied consistently across the organization.1, 2

Yet the cost of inaction is eye-watering. By the end of 2026, Forrester projects that ungoverned AI will cost B2B enterprises more than $10 billion in enterprise value, driven largely by legal settlements and fines.

So, why does this keep happening?

Put simply, governance is being built at the wrong layer.

Today, most AI governance lives at the organizational layer. It takes the form of documentation, like acceptable use policies and rules for vetting new tools.

AI, by contrast, runs at the application layer—in SAP, in your team’s Salesforce instance, and in your company’s Microsoft 365 environment. Your vendors’ roadmaps live there, but your policies don’t. When there’s no operational governance at that layer, your policy can’t reach the place where AI actually touches your business.

The same disconnect plays out in the open-source environments where custom enterprise AI is actually built. There, the gap lives between organizational policy and the model layer. That’s where the consequences are more visible: developers pull unvetted models into production, dependency chains introduce invisible vulnerabilities, and there’s no vendor to call when something goes wrong.

The application layer is where AI governance most often breaks down. But the open-source model layer is where it likely never existed in the first place.

Why spending more money won’t change the fundamentals

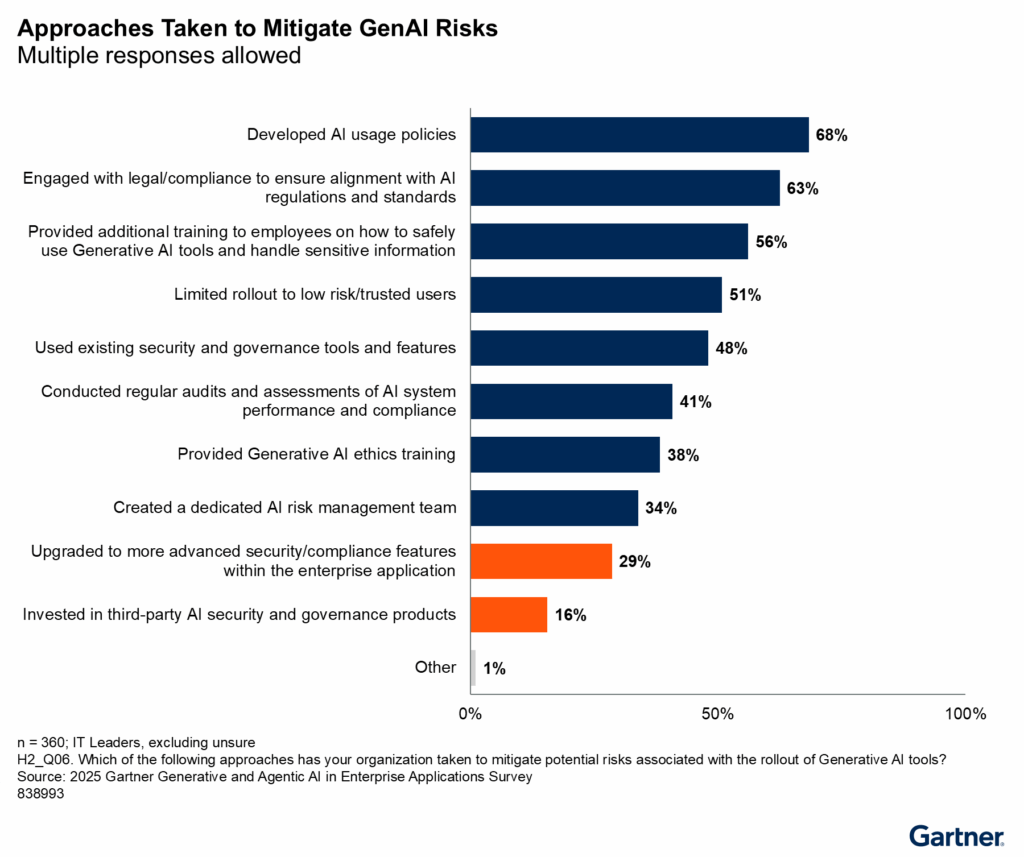

Odds are, you’ve already invested in the basics. Most IT leaders have, Gartner research shows: 68% have developed AI usage policies, 63% have engaged with legal and compliance to ensure alignment with AI regulations and standards, and 56% have provided additional training to employees on how to safely use GenAI tools and handle sensitive information.

The next logical step, then, would be to increase investment in tools, training, and hardware… right?

Probably not. More than half of CEOs (56%) say they haven’t realized measurable revenue or cost benefits from their AI investments, according to a PwC survey of more than 4,400 executives. Investment is going up, but returns aren’t following because most of the spending still lands at the organizational layer, rather than the operational layer. Policies that can’t be enforced at runtime don’t govern anything.

There’s a better path, though. Organizations with a centralized AI strategy that’s applied consistently across their applications are two times more likely to report significant value from their AI investments. Governance infrastructure may not sound shiny or glamorous, but it’s the necessary catalyst for driving AI ROI.

How to build the operational governance layer

As we see it, the Gartner report focuses on a very specific version of this problem. When Microsoft embeds AI into its Office products, it doesn’t ask your governance committee for permission first. Instead, it ships AI to your employees before you’ve had a chance to evaluate it.

But the same problem exists for organizations building with open-source models. That’s why organizational governance alone isn’t enough: It can’t enforce your policies. Operational governance can, because it imposes technical controls before AI reaches your environment.

To build that operational governance layer, you’ll need to do these three things:

1. Take a complete inventory of what’s running

You can’t enforce governance over models you don’t know exist. In most open-source AI environments, there’s no centralized picture of what models are running across the organization. Over time, you end up with dozens of models in production, owned by no one, with no record of why they were chosen or what risks they carry.

A curated model catalog solves that problem by giving your organization a single, searchable repository of every approved model, as well as its security posture, licensing terms, and component breakdown. Think of your model catalog less as a product and more as the answer to three basic questions your governance committee should be able to answer at any time: Which AI models are approved for use? Are those models secure? Are we legally allowed to use them?
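To make the catalog idea concrete, here is a minimal Python sketch of what one could look like under the hood. Everything in it is an assumption for illustration: the `CatalogEntry` and `ModelCatalog` names, the fields, the model IDs, and the rule that “secure” means zero open vulnerabilities at the last scan. It only shows how each of the three questions becomes a lookup, and how comparing the catalog against what’s actually running surfaces models nobody recorded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogEntry:
    """One approved model in a hypothetical curated catalog."""
    model_id: str                     # illustrative "namespace/name@version" format
    license: str                      # license identifier, e.g. "apache-2.0"
    open_vulns: int                   # unresolved known vulnerabilities at last scan
    components: tuple[str, ...] = ()  # dependencies and sub-artifacts
    owner: str = "unassigned"         # accountable team


class ModelCatalog:
    def __init__(self, allowed_licenses: set[str]):
        self._entries: dict[str, CatalogEntry] = {}
        self._allowed_licenses = allowed_licenses

    def add(self, entry: CatalogEntry) -> None:
        self._entries[entry.model_id] = entry

    # Q1: Which AI models are approved for use?
    def approved(self) -> list[str]:
        return sorted(self._entries)

    # Q2: Are those models secure? (assumption: zero open vulnerabilities)
    def is_secure(self, model_id: str) -> bool:
        entry = self._entries.get(model_id)
        return entry is not None and entry.open_vulns == 0

    # Q3: Are we legally allowed to use them?
    def is_licensed(self, model_id: str) -> bool:
        entry = self._entries.get(model_id)
        return entry is not None and entry.license in self._allowed_licenses

    def unrecorded(self, running: set[str]) -> set[str]:
        """Models seen in production that the catalog has no record of."""
        return running.difference(self._entries)


catalog = ModelCatalog(allowed_licenses={"apache-2.0", "mit"})
catalog.add(CatalogEntry("acme/summarizer@v1", "apache-2.0", 0, owner="data-platform"))
# A vulnerability disclosed after approval shows up as a changed security posture.
catalog.add(CatalogEntry("acme/classifier@v3", "mit", 2, components=("tokenizer@1.2",)))

print(catalog.approved())                         # ['acme/classifier@v3', 'acme/summarizer@v1']
print(catalog.is_secure("acme/classifier@v3"))    # False
print(catalog.is_licensed("acme/summarizer@v1"))  # True
print(catalog.unrecorded({"acme/summarizer@v1", "someone/experiment"}))  # {'someone/experiment'}
```

The `unrecorded` check is the inventory step in miniature: anything running that the catalog can’t account for is, by definition, a model with no recorded owner or rationale.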

2. Block non-compliant models before they reach your developers

Visibility tells you what’s running, but on its own it doesn’t control what gets in. Among IT leaders, Gartner found that only 16% have invested in dedicated AI governance tools. Most are focusing on developing policies and training.

That means the vast majority of IT leaders are investing in policies without a dedicated mechanism to enforce them.

Automated policy controls at the model layer change that dynamic. By setting access rules based on security vulnerabilities, licensing requirements, or organizational criteria—and enforcing them before any models reach your developers—you’ll close the gap between what your governance committee approves and what your teams actually use.
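A policy gate at the model layer can be as simple as a function that checks every model request against explicit rules before anything is downloaded. The sketch below is hypothetical and not a reference to any specific product: the `Policy` fields, the CVSS limit of 6.9 (which blocks anything rated High or Critical), and the approved-source check standing in for “organizational criteria” are all illustrative choices.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ModelRequest:
    model_id: str
    license: str
    max_cvss: float   # highest CVSS score among known vulnerabilities (0.0 if none)
    source: str       # where the artifact would be pulled from


@dataclass(frozen=True)
class Policy:
    allowed_licenses: frozenset[str]
    max_allowed_cvss: float
    allowed_sources: frozenset[str]  # stand-in for "organizational criteria"


def evaluate(req: ModelRequest, policy: Policy) -> tuple[bool, list[str]]:
    """Return (allowed, reasons), reporting every failed rule rather than the first."""
    reasons: list[str] = []
    if req.license not in policy.allowed_licenses:
        reasons.append(f"license '{req.license}' is not on the allow-list")
    if req.max_cvss > policy.max_allowed_cvss:
        reasons.append(f"CVSS {req.max_cvss} exceeds limit {policy.max_allowed_cvss}")
    if req.source not in policy.allowed_sources:
        reasons.append(f"source '{req.source}' is not an approved registry")
    return (not reasons, reasons)


def fetch_for_developer(req: ModelRequest, policy: Policy,
                        download: Callable[[str], str]) -> str:
    """Gate: `download` is only called if the policy allows the model."""
    allowed, reasons = evaluate(req, policy)
    if not allowed:
        raise PermissionError(f"{req.model_id} blocked: " + "; ".join(reasons))
    return download(req.model_id)


policy = Policy(
    allowed_licenses=frozenset({"apache-2.0", "mit"}),
    max_allowed_cvss=6.9,  # illustrative: blocks High (7.0+) and Critical findings
    allowed_sources=frozenset({"internal-mirror"}),
)
ok = ModelRequest("acme/summarizer@v1", "apache-2.0", 0.0, "internal-mirror")
bad = ModelRequest("someone/experiment", "unknown", 9.8, "public-hub")

print(evaluate(ok, policy))  # (True, [])
try:
    fetch_for_developer(bad, policy, download=lambda m: f"weights for {m}")
except PermissionError as err:
    print(err)  # lists all three failed rules
```

Returning every failed rule, rather than stopping at the first, means a blocked developer sees the full list of reasons, and the same list can feed the monitoring layer described in the next step.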

3. Build a monitoring layer that makes compliance continuous

So, you’ve established AI governance at the operational layer. Now, you need to keep it honest over time.

A monitoring layer lets you log every model access event, approval decision, and policy enforcement action in a system that’s queryable and reportable. That means whenever something goes wrong (or a regulator asks questions, or leadership wants to know what’s actually running in production), you have an on-demand record of decisions that were made, rather than a scramble to reconstruct them.

Building that reporting layer also changes how governance feels internally. Organizations that can demonstrate compliance on demand tend to find that governance shifts into routine operations, and deployment decisions get faster and more confident.

The bottom line

The gap between AI strategy and AI value is an infrastructure problem. It can’t be solved with tools, policies, and training alone. Organizations need governance at the operational layer to see meaningful change.

If you want to understand the full scope of what that layer looks like, check out the Gartner® report.

1 Gartner, Optimize AI Governance: Choose Centralized or Hybrid Models, Svetlana Sicular, Mike Rollings, Whit Andrews, 20 February 2026.

2 Gartner, How to Mature Generative and Agentic AI Governance for Enterprise Applications, Max Goss, Stephen Emmott, Tristan Iles, 29 October 2025.

GARTNER is a trademark of Gartner, Inc. and/or its affiliates.